LLM Prompt Engineering for Enterprise: Beyond Basic Templates

Enterprise LLM applications demand sophisticated prompt engineering beyond basic templates. Learn advanced techniques including few-shot learning, chain-of-thought reasoning, structured outputs, and dynamic context injection for production systems.


Enterprise LLM applications demand far more sophisticated prompt engineering than the basic templates found in tutorials. Production systems need structured approaches that ensure reliability, consistency, and security at scale. Simple prompts work for experimentation, but enterprise applications need techniques that handle complex business logic, maintain data integrity, and produce consistent outputs across thousands of requests.

Few-Shot Learning: Teaching Through Examples

Few-shot prompting provides the LLM with carefully selected examples that demonstrate the desired behaviour. This technique is particularly powerful for enterprise applications where domain-specific outputs are critical. Unlike zero-shot prompting, which relies on instructions alone, few-shot prompting guides the model with concrete examples.

For enterprise applications, few-shot examples should:

- Represent real business scenarios, not simplified cases

- Include edge cases and error conditions

- Demonstrate the exact format and tone required

- Cover the range of complexity the system will encounter

Consider a financial services application that processes loan applications. Rather than instructing "Extract key information", provide examples showing exactly how to handle incomplete applications, non-standard formats, and regulatory compliance requirements.

    Example 1:
    Input: Application for business loan, $150K, restaurant, 3 years operation
    Output: {
      "loan_type": "business",
      "amount": 150000,
      "industry": "food_service",
      "business_age_years": 3,
      "risk_category": "standard"
    }

    Example 2:
    Input: Personal loan request, amount not specified, new graduate
    Output: {
      "loan_type": "personal",
      "amount": null,
      "industry": null,
      "employment_status": "new_graduate",
      "risk_category": "requires_review",
      "missing_fields": ["amount"]
    }
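A minimal sketch of how the two examples above might be assembled into a few-shot prompt, assuming a chat-style API that accepts a list of role/content messages. The `build_few_shot_messages` helper and the system instruction wording are hypothetical; adapt the message format to whichever model provider you use.

```python
import json

# Hypothetical example store mirroring the two loan-application examples above.
FEW_SHOT_EXAMPLES = [
    (
        "Application for business loan, $150K, restaurant, 3 years operation",
        {
            "loan_type": "business",
            "amount": 150000,
            "industry": "food_service",
            "business_age_years": 3,
            "risk_category": "standard",
        },
    ),
    (
        "Personal loan request, amount not specified, new graduate",
        {
            "loan_type": "personal",
            "amount": None,
            "industry": None,
            "employment_status": "new_graduate",
            "risk_category": "requires_review",
            "missing_fields": ["amount"],
        },
    ),
]

# Hypothetical instruction text; the examples carry most of the format guidance.
SYSTEM_INSTRUCTION = (
    "Extract structured loan data from the application. "
    "Return JSON only. Use null for unknown values and list them in missing_fields."
)


def build_few_shot_messages(application_text: str) -> list[dict[str, str]]:
    """Interleave example inputs and outputs as earlier user/assistant turns."""
    messages = [{"role": "system", "content": SYSTEM_INSTRUCTION}]
    for example_input, example_output in FEW_SHOT_EXAMPLES:
        messages.append({"role": "user", "content": example_input})
        # json.dumps renders Python None as JSON null, matching the examples.
        messages.append({"role": "assistant", "content": json.dumps(example_output)})
    # The real application goes last so the model continues the demonstrated pattern.
    messages.append({"role": "user", "content": application_text})
    return messages


if __name__ == "__main__":
    for message in build_few_shot_messages("Equipment loan, $80K, bakery, 5 years operation"):
        print(message["role"], "->", message["content"][:60])
```

Presenting examples as prior conversation turns, rather than pasting them into one long instruction, lets the model see exactly what a "correct" reply looks like; adding an edge case is then just another entry in the example list.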

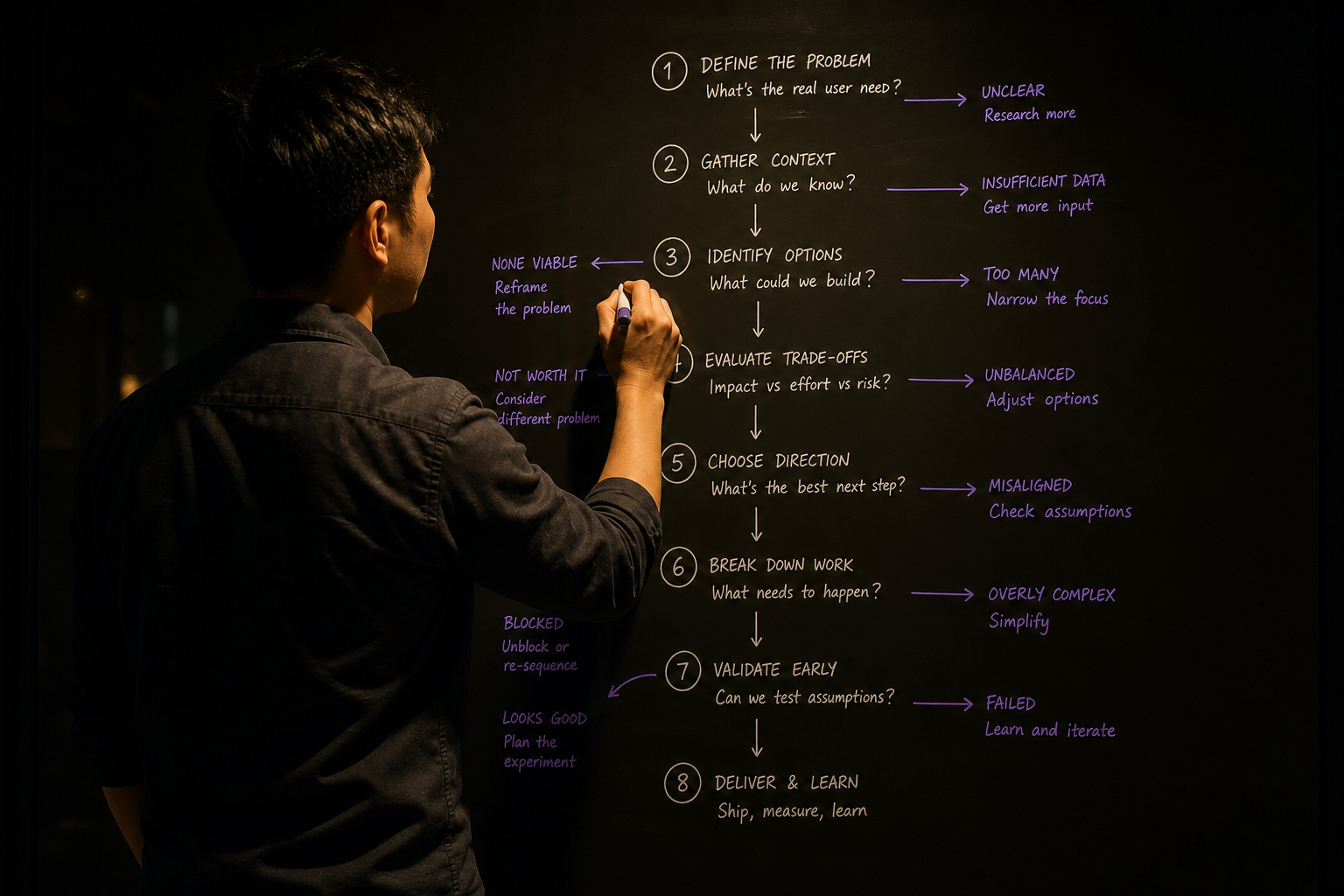

Chain-of-Thought Reasoning for Complex Decisions

Chain-of-thought (CoT) prompting breaks complex reasoning into explicit steps. This technique is essential for enterprise applications where decisions must be auditable and explainable. CoT prompting helps LLMs work through multi-step problems systematically, reducing errors and providing transparency.

Enterprise chain-of-thought prompts should:

- Define clear reasoning steps

- Include decision checkpoints

- Provide audit trails for compliance

- Handle multiple decision paths

For a supply chain application determining inventory reorder points:

    Analyse the inventory situation step by step:

    1. Current stock level: [X units]
    2. Daily usage rate: [Y units/day]
    3. Lead time: [Z days]
    4. Safety stock requirement: [A units]

    Calculation:
    - Stock needed during lead time: Y × Z = [result]
    - Total reorder point: (Y × Z) + A = [result]
    - Days until reorder point is reached: (X − reorder point) ÷ Y = [result] (zero or negative means reorder now)

    Decision: [Reorder now / Monitor / No action needed]

    Reasoning: [Explain the decision based on calculations]
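The arithmetic in this template is deterministic, so one option is to compute it in code as well and compare the model's stated figures against it, which strengthens the audit trail. A minimal sketch: it computes days until the reorder point is reached as (X − reorder point) ÷ Y, and the `monitor_window_days` threshold separating "Monitor" from "No action needed" is a hypothetical business rule that is not part of the original template.

```python
from dataclasses import dataclass


@dataclass
class ReorderAssessment:
    lead_time_demand: float    # Y * Z
    reorder_point: float       # (Y * Z) + A
    days_until_reorder: float  # (X - reorder_point) / Y; <= 0 means already at or below it
    decision: str


def assess_inventory(
    current_stock: float,              # X, units on hand
    daily_usage: float,                # Y, units per day
    lead_time_days: float,             # Z, days
    safety_stock: float,               # A, units
    monitor_window_days: float = 7.0,  # hypothetical threshold for "Monitor"
) -> ReorderAssessment:
    if daily_usage <= 0:
        raise ValueError("daily_usage must be positive")
    lead_time_demand = daily_usage * lead_time_days
    reorder_point = lead_time_demand + safety_stock
    days_until_reorder = (current_stock - reorder_point) / daily_usage

    if current_stock <= reorder_point:
        decision = "Reorder now"
    elif days_until_reorder <= monitor_window_days:
        decision = "Monitor"
    else:
        decision = "No action needed"
    return ReorderAssessment(lead_time_demand, reorder_point, days_until_reorder, decision)
```

For example, with 500 units on hand, usage of 40 units/day, a 7-day lead time and 100 units of safety stock, the reorder point is 280 + 100 = 380 units and it will be reached in (500 − 380) ÷ 40 = 3 days, so the sketch returns "Monitor". If the model's "Reasoning" section reports different numbers, the response can be flagged for review.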

Structured Output for System Integration

Enterprise LLM applications must integrate with existing systems, databases, and APIs. Structured output ensures consistent data formats that downstream systems can process reliably. This goes beyond simple JSON to include validation, error handling, and schema compliance.

Effective structured output techniques include:

- JSON Schema validation

- Enum constraints for categorical data

- Required field enforcement

- Data type validation

- Custom formatting rules

Example structured prompt for customer service ticket classification:

    Classify this customer inquiry and return a JSON response matching this schema:

    {
      "category": "billing" | "technical" | "sales" | "general",
      "priority": "low" | "medium" | "high" | "urgent",
      "department": "support" | "engineering" | "billing" | "sales",
      "estimated_resolution_hours": number,
      "requires_escalation": boolean,
      "confidence_score": number (0-1)
    }

    Customer inquiry: [INPUT]

    IMPORTANT: Response must be valid JSON only. No additional text.
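An instruction like "valid JSON only" reduces malformed output but does not guarantee it, so the response is worth checking in code before it reaches downstream systems. A minimal standard-library sketch that enforces the schema above (required fields, enum values, types, and the 0 to 1 confidence range); a JSON Schema validator library could replace the hand-written checks. The `validate_ticket` name, raising `ValueError` on failure, and requiring non-negative resolution hours are assumptions.

```python
import json

# Allowed values taken from the classification schema above.
ENUMS = {
    "category": {"billing", "technical", "sales", "general"},
    "priority": {"low", "medium", "high", "urgent"},
    "department": {"support", "engineering", "billing", "sales"},
}
REQUIRED = set(ENUMS) | {"estimated_resolution_hours", "requires_escalation", "confidence_score"}


def _is_number(value) -> bool:
    # bool is a subclass of int in Python, so exclude it explicitly.
    return isinstance(value, (int, float)) and not isinstance(value, bool)


def validate_ticket(raw_response: str) -> dict:
    """Parse the model's reply; raise ValueError if it breaks the schema."""
    try:
        data = json.loads(raw_response)
    except json.JSONDecodeError as exc:
        raise ValueError(f"response is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("response must be a JSON object")

    missing = REQUIRED - set(data)
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")

    for name, allowed in ENUMS.items():
        if not isinstance(data[name], str) or data[name] not in allowed:
            raise ValueError(f"{name}={data[name]!r} not in {sorted(allowed)}")

    # Non-negative hours is an added assumption; the schema only says "number".
    hours = data["estimated_resolution_hours"]
    if not _is_number(hours) or hours < 0:
        raise ValueError("estimated_resolution_hours must be a non-negative number")
    if not isinstance(data["requires_escalation"], bool):
        raise ValueError("requires_escalation must be a boolean")
    score = data["confidence_score"]
    if not _is_number(score) or not 0 <= score <= 1:
        raise ValueError("confidence_score must be a number between 0 and 1")
    return data
```

A failed validation is a natural trigger for a single retry (optionally feeding the error message back to the model) or for routing the ticket to a human queue.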

Implementing Guardrails and Safety Measures

Enterprise applications require robust guardrails to prevent harmful outputs, protect sensitive data, and maintain compliance. Guardrails operate at multiple levels: input validation, output filtering, and behaviour constraints.

Key guardrail categories for enterprise use:

| Guardrail Type | Purpose | Implementation |
|---|---|---|
| Content filtering | Block inappropriate content | Keyword lists, classification models |
| Data protection | Prevent sensitive data exposure | Pattern matching, masking |
| Business rules | Enforce domain constraints | Validation logic, approval workflows |
| Output validation | Ensure format compliance | Schema validation, range checks |
| Rate limiting | Prevent abuse | Request throttling, user quotas |
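Most rows in this table are enforced in application code rather than in the prompt. As one example, the rate-limiting row could be implemented with a per-user token bucket. This is a minimal in-memory sketch; the `TokenBucketLimiter` class and the capacity and refill values are hypothetical, and a deployment running several instances would need a shared store instead of a Python dict.

```python
import time
from typing import Callable


class TokenBucketLimiter:
    """Per-user token bucket: each request spends one token; tokens refill over time."""

    def __init__(self, capacity: int, refill_per_second: float,
                 clock: Callable[[], float] = time.monotonic):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.clock = clock  # injectable so tests can control time
        self._buckets: dict[str, tuple[float, float]] = {}  # user_id -> (tokens, last_seen)

    def allow(self, user_id: str) -> bool:
        now = self.clock()
        # New users start with a full bucket.
        tokens, last_seen = self._buckets.get(user_id, (float(self.capacity), now))
        # Credit tokens earned since the last request, capped at capacity.
        tokens = min(self.capacity, tokens + (now - last_seen) * self.refill_per_second)
        if tokens >= 1:
            self._buckets[user_id] = (tokens - 1, now)
            return True
        self._buckets[user_id] = (tokens, now)
        return False
```

Usage: create one limiter, for instance `TokenBucketLimiter(capacity=20, refill_per_second=0.5)`, and call `allow(user_id)` before each LLM request, returning an HTTP 429-style error when it is `False`. The bucket permits short bursts up to `capacity` while holding the long-run rate to `refill_per_second`.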

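Some guardrails can also be written directly into the prompt. Because a model may not follow instructions reliably, treat these as a complement to the code-level checks in the table, not a replacement: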

    BEFORE PROCESSING:
    - Do not include any personal identifiers (names, emails, phone numbers)
    - Do not make financial recommendations without a disclaimer
    - Flag any requests for regulated advice

    AFTER PROCESSING:
    - Validate that all dollar amounts are positive numbers
    - Ensure dates are in ISO 8601 format (YYYY-MM-DD)
    - Confirm all required fields are present
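The same rules can be enforced in code on the way into and out of the model. A minimal sketch, assuming simple regular expressions are acceptable as a first pass for masking emails and phone numbers (production PII detection usually needs broader patterns or a dedicated classifier) and assuming the caller says which output fields hold amounts and dates. The `mask_pii` and `check_output` names are hypothetical.

```python
import re
from datetime import date

# Deliberately simple, illustrative patterns: they miss many real-world formats,
# and the phone pattern can also match other long digit runs such as dates.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE_RE = re.compile(r"\+?\d[\d\s()-]{7,}\d")


def mask_pii(text: str) -> str:
    """Replace emails, then phone-like numbers, with placeholder tokens."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


def check_output(record: dict, required: set[str],
                 amount_fields: set[str], date_fields: set[str]) -> list[str]:
    """Return rule violations for a parsed model response (empty list means it passed)."""
    keys = set(record)
    problems = [f"missing field: {name}" for name in sorted(required - keys)]
    for name in sorted(amount_fields & keys):
        value = record[name]
        if isinstance(value, bool) or not isinstance(value, (int, float)) or value <= 0:
            problems.append(f"{name} must be a positive number")
    for name in sorted(date_fields & keys):
        try:
            date.fromisoformat(str(record[name]))
        except ValueError:
            problems.append(f"{name} must be an ISO 8601 date")
    return problems
```

`mask_pii` can run on user input before it is sent to the model and again on the model's reply; `check_output` returns every violation at once, which is more useful for logging and monitoring than stopping at the first failure.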

Dynamic Context Injection for Personalisation

Dynamic context injection allows LLMs to access relevant information at query time, enabling personalised responses while maintaining data security. This technique is crucial for enterprise applications serving multiple customers with different needs, preferences, and access levels.

Effective dynamic context includes:

- User profile and permissions

- Historical interaction data

- Real-time system status

- Relevant business rules

- Current market conditions

Example dynamic context for a CRM application:

    CONTEXT FOR THIS REQUEST:
    User: {user.name} (Role: {user.role})
    Customer: {customer.name} (Tier: {customer.tier}, Region: {customer.region})
    Recent interactions: {recent_interactions}
    Account status: {account.status}
    Permitted actions: {user.permissions}

    Current business rules:
    - {applicable_rules}

    Now respond to: {user_query}
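Because the placeholders above use attribute-style fields, Python's `str.format` can fill them directly from objects. A minimal sketch, assuming hypothetical `user`, `customer` and `account` records (`SimpleNamespace` stands in for whatever your CRM returns) and a hypothetical cap on how many recent interactions are included. The template does no access control itself: only data the requesting user is permitted to see should be passed in.

```python
from types import SimpleNamespace

# Mirrors the CRM template above; str.format resolves {user.name} as attribute access.
# build_context renders each rule as a "- rule" line, so the template holds only {applicable_rules}.
CONTEXT_TEMPLATE = """CONTEXT FOR THIS REQUEST:
User: {user.name} (Role: {user.role})
Customer: {customer.name} (Tier: {customer.tier}, Region: {customer.region})
Recent interactions: {recent_interactions}
Account status: {account.status}
Permitted actions: {user.permissions}

Current business rules:
{applicable_rules}

Now respond to: {user_query}"""


def build_context(user, customer, account, interactions, rules, user_query, max_interactions=3):
    """Fill the template. max_interactions is a hypothetical cap to keep the prompt short."""
    # Assumes interactions are ordered oldest-first, so the tail is the most recent.
    recent = interactions[-max_interactions:] if max_interactions > 0 else []
    return CONTEXT_TEMPLATE.format(
        user=user,
        customer=customer,
        account=account,
        recent_interactions="; ".join(recent) or "none",
        applicable_rules="\n".join(f"- {rule}" for rule in rules) or "- none",
        # Substituted values are not re-parsed, so braces in the user's text are harmless.
        user_query=user_query,
    )


if __name__ == "__main__":
    # Hypothetical records; permissions is pre-joined into a string for display.
    user = SimpleNamespace(name="Priya", role="Account Manager",
                           permissions="view_account, create_ticket")
    customer = SimpleNamespace(name="Acme Pty Ltd", tier="Gold", region="APAC")
    account = SimpleNamespace(status="active")
    print(build_context(user, customer, account,
                        ["call 1", "email 2", "call 3", "chat 4"],
                        ["Gold tier receives a 24-hour response target"],
                        "Can we extend the contract?"))
```

Keeping the template as a constant and the data lookup in a function makes it easy to version the template separately and to test that restricted fields never reach the prompt.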

Production Monitoring and Iteration

Enterprise prompt engineering is not a one-time activity. Production systems require continuous monitoring, performance measurement, and iterative improvement. Successful enterprises establish feedback loops that capture real-world performance and drive prompt refinement.

Monitoring should track:

- Response accuracy and relevance

- Processing time and costs

- User satisfaction scores

- Error rates and types

- Compliance violations

Establish A/B testing frameworks to validate prompt improvements before full deployment. Version control your prompts like code, with clear change logs and rollback capabilities.
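A minimal sketch of both ideas, assuming prompts are stored as immutable, versioned entries (in practice they would live in source control or a configuration store) and that traffic is split by hashing a stable user ID, so each user consistently sees the same variant. The `PromptRegistry` class, its method names and the 10% default treatment share are hypothetical.

```python
import hashlib
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class PromptRegistry:
    """Keeps every prompt version and a change log so a bad release can be rolled back."""
    versions: dict[str, str] = field(default_factory=dict)  # version label -> prompt text
    changelog: list[str] = field(default_factory=list)
    active: Optional[str] = None
    history: list[str] = field(default_factory=list)        # previously active versions

    def register(self, version: str, prompt: str, note: str) -> None:
        if version in self.versions:
            raise ValueError(f"version {version} already exists; versions are immutable")
        self.versions[version] = prompt
        self.changelog.append(f"{version}: {note}")

    def activate(self, version: str) -> None:
        if version not in self.versions:
            raise KeyError(version)
        if self.active is not None:
            self.history.append(self.active)
        self.active = version

    def rollback(self) -> str:
        if not self.history:
            raise RuntimeError("no earlier version to roll back to")
        self.active = self.history.pop()
        return self.active


def ab_variant(user_id: str, experiment: str, treatment_share: float = 0.1) -> str:
    """Deterministically assign a user to 'control' or 'treatment' for one experiment."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode()).hexdigest()
    bucket = int(digest[:8], 16) / 2**32  # first 32 bits mapped to [0, 1)
    return "treatment" if bucket < treatment_share else "control"
```

Including the experiment name in the hash means a user's assignment in one test does not determine their assignment in the next. Logging the active prompt version and the variant with every request is what lets the monitoring metrics listed above be compared per version.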

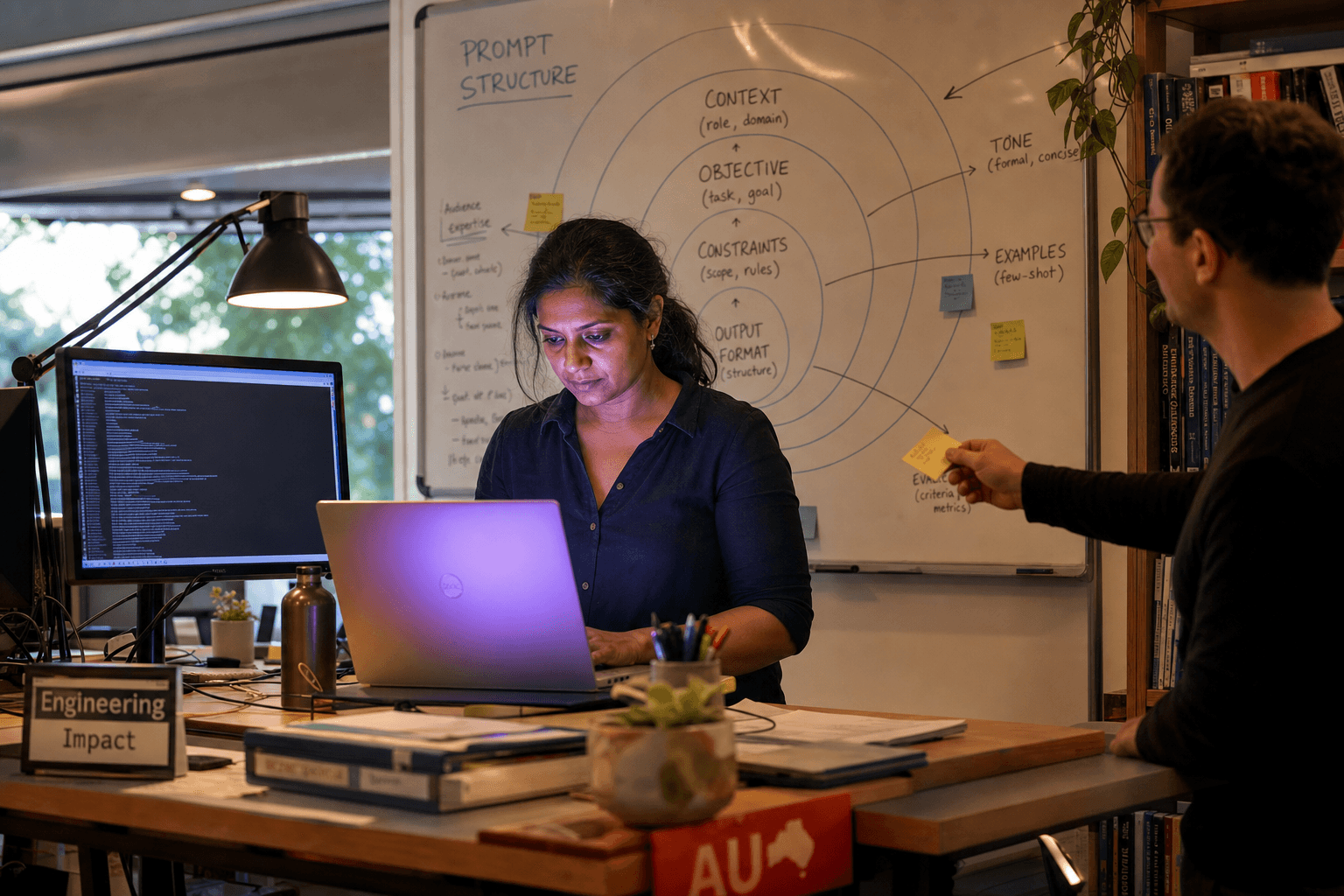

Building Prompt Engineering Capabilities

Successful enterprise LLM implementations require dedicated prompt engineering capabilities within your team. This combines technical skills with domain expertise, so that prompts accurately reflect your business context.

Consider developing internal expertise in advanced prompting techniques, or partnering with specialists who understand enterprise requirements. AI engineering and AI product strategy are critical disciplines for scaling LLM applications beyond prototypes to production systems that deliver real business value.

For more insights on implementing AI in enterprise environments, explore our insights on AI strategy and engineering best practices.

Building production-ready LLM applications requires expertise in advanced prompt engineering, system integration, and enterprise-grade reliability. If you're moving beyond basic AI experiments to production applications, get in touch to discuss how we can help you implement robust prompt engineering practices that scale with your business.

Sarah Mitchell

Principal AI Engineer at Horizon Labs. Specialises in production LLM systems — RAG architectures, fine-tuning pipelines, and the evaluation harnesses that prove a model still works six months after launch. Eight years in machine learning, the last four shipping AI into Australian financial services and healthcare. PhD-level depth, founder-level pragmatism.