AI Model Monitoring in Production: What to Track and How to Alert

Production AI models fail silently through accuracy drift, data changes, and performance degradation. Learn what metrics to track, how to set up effective alerts, and compare monitoring tools to catch problems before they impact your business.


Production AI models fail silently. Unlike traditional software, where errors tend to surface quickly as exceptions or failed requests, ML models degrade gradually as input data shifts, accuracy drops, and business metrics drift. By the time you notice revenue impact, it's often too late.

AI model monitoring is the practice of continuously tracking your deployed models' performance, data quality, and business impact to catch problems before they affect customers. Without proper monitoring, you're flying blind with systems that directly impact your bottom line.

This guide covers the essential metrics to track, practical alerting strategies, and tool comparisons to help you build robust ML monitoring for your production systems.

What Makes AI Model Monitoring Different

Traditional software monitoring focuses on uptime, response times, and error rates. ML monitoring adds layers of complexity because models can produce technically valid outputs while being completely wrong for the business context.


A fraud detection model might maintain 99% uptime and sub-100ms response times while completely missing new fraud patterns. Your infrastructure dashboards show green, but you're losing thousands to undetected fraud.

ML models exist in a feedback loop with real-world data. As user behaviour changes, market conditions shift, or data collection processes evolve, model performance naturally degrades. This isn't a bug—it's the fundamental challenge of deploying statistical models in dynamic environments.

Core Metrics to Track

Model Performance and Accuracy Drift

Accuracy drift occurs when your model's real-world performance deviates from the performance measured during training and validation. Track prediction accuracy, precision, recall, and F1 scores wherever ground truth is available.

For models without immediate feedback loops (like recommendation systems), use proxy metrics. Monitor click-through rates for recommender systems, conversion rates for personalisation models, or user engagement metrics for content ranking algorithms.

Set up sliding-window accuracy calculations that compare recent performance against a baseline period. Alert when accuracy falls below an acceptable threshold, typically a 5-10% decline from baseline depending on business criticality.
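A minimal sliding-window sketch in Python, assuming labels eventually arrive for each prediction and reading the 5-10% figure as a relative drop from a baseline accuracy measured over a known-good period. The class name `RollingAccuracyMonitor`, the window size and the 5% default are illustrative choices, not part of any library.

```python
from collections import deque
from typing import Optional


class RollingAccuracyMonitor:
    """Accuracy over the most recent labelled predictions, compared to a baseline."""

    def __init__(self, baseline_accuracy: float, window_size: int = 1000,
                 max_relative_drop: float = 0.05) -> None:
        self.baseline_accuracy = baseline_accuracy
        self.max_relative_drop = max_relative_drop
        # A deque with maxlen silently discards the oldest outcome once full
        self.outcomes: deque = deque(maxlen=window_size)

    def record(self, prediction, label) -> None:
        """Store whether one prediction matched its (possibly late-arriving) label."""
        self.outcomes.append(prediction == label)

    def accuracy(self) -> Optional[float]:
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def should_alert(self) -> bool:
        """True when the window is full and accuracy has fallen more than
        max_relative_drop below the baseline (0.05 means a 5% relative drop)."""
        acc = self.accuracy()
        # Don't judge a partially filled window: early estimates are noisy
        if acc is None or len(self.outcomes) < self.outcomes.maxlen:
            return False
        relative_drop = (self.baseline_accuracy - acc) / self.baseline_accuracy
        return relative_drop > self.max_relative_drop
```

A fixed-size `deque` keeps memory bounded regardless of traffic; a time-based window (for example, the last 24 hours of labelled predictions) is an equally reasonable choice when label volume varies a lot.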

Data Drift Detection

Data drift happens when incoming production data differs from training data distributions. Your model was trained on historical patterns that may no longer represent reality.

Monitor statistical distributions of input features using techniques like:

- Population Stability Index (PSI) for categorical features, or continuous features after binning

- Kolmogorov-Smirnov tests for continuous distributions

- Jensen-Shannon divergence for comparing probability distributions

- Feature correlation changes to detect subtle relationship shifts
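Two of the tests listed above can be computed with the standard library alone. This is an illustrative sketch: `ks_statistic` returns only the two-sample KS statistic (the largest vertical gap between the two empirical CDFs), not a p-value, and `js_divergence` assumes both distributions have already been binned into the same buckets. Both function names are hypothetical.

```python
import math
from typing import Sequence


def ks_statistic(sample_a: Sequence[float], sample_b: Sequence[float]) -> float:
    """Two-sample Kolmogorov-Smirnov statistic: max |F_a(x) - F_b(x)|."""
    if len(sample_a) == 0 or len(sample_b) == 0:
        raise ValueError("both samples must be non-empty")
    a, b = sorted(sample_a), sorted(sample_b)
    n, m = len(a), len(b)
    i = j = 0
    d = 0.0
    while i < n and j < m:
        x = min(a[i], b[j])
        # Step both empirical CDFs past every value equal to x so ties are handled
        while i < n and a[i] == x:
            i += 1
        while j < m and b[j] == x:
            j += 1
        d = max(d, abs(i / n - j / m))
    # Once one sample is exhausted the gap can only shrink, so d is final
    return d


def js_divergence(p: Sequence[float], q: Sequence[float]) -> float:
    """Jensen-Shannon divergence in base 2 (result lies in [0, 1]) between
    two discrete distributions given as aligned probability vectors."""
    if len(p) != len(q):
        raise ValueError("distributions must have the same number of bins")
    mix = [(pi + qi) / 2 for pi, qi in zip(p, q)]

    def kl(x: Sequence[float], y: Sequence[float]) -> float:
        # Terms with x_i == 0 contribute 0; the mixture y_i is > 0 whenever x_i > 0
        return sum(xi * math.log2(xi / yi) for xi, yi in zip(x, y) if xi > 0)

    return 0.5 * kl(p, mix) + 0.5 * kl(q, mix)
```

If SciPy is available, `scipy.stats.ks_2samp` computes the KS statistic together with a p-value, which avoids maintaining this code yourself.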

Alert when drift scores exceed predetermined thresholds. PSI values above 0.2 typically indicate significant drift requiring investigation.
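A minimal PSI sketch for a categorical feature, using the 0.2 alert threshold quoted in this section. The `eps` floor is an added assumption so that a category seen in only one of the two samples does not cause a division by zero or `log(0)`; the function name and sample data are hypothetical.

```python
import math
from collections import Counter
from typing import Hashable, Sequence

PSI_ALERT_THRESHOLD = 0.2  # rule-of-thumb threshold for significant drift


def psi(expected: Sequence[Hashable], actual: Sequence[Hashable],
        eps: float = 1e-4) -> float:
    """Population Stability Index between a baseline (expected) sample and a
    production (actual) sample of a categorical feature:
    sum over categories of (actual% - expected%) * ln(actual% / expected%)."""
    exp_counts, act_counts = Counter(expected), Counter(actual)
    n_exp, n_act = len(expected), len(actual)
    total = 0.0
    for category in set(exp_counts) | set(act_counts):
        # Floor each share at eps so unseen categories don't produce log(0)
        e = max(exp_counts[category] / n_exp, eps)
        a = max(act_counts[category] / n_act, eps)
        total += (a - e) * math.log(a / e)
    return total


# Hypothetical payment-method feature: a new "wallet" category appears in production
baseline = ["card"] * 70 + ["bank"] * 30
current = ["card"] * 40 + ["bank"] * 40 + ["wallet"] * 20
score = psi(baseline, current)
if score > PSI_ALERT_THRESHOLD:
    print(f"PSI {score:.2f} exceeds {PSI_ALERT_THRESHOLD}: investigate drift")
```

Most of the score in this example comes from the new `wallet` category. For continuous features, a common approach is to bin values first (for example, on deciles of the baseline sample) and pass the bin labels to the same function.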

Prediction Distribution Monitoring

Track the distribution of your model's outputs over time. Sudden changes in prediction patterns often signal upstream data issues or model problems.

For classification models, monitor class distribution changes. If your fraud model suddenly predicts 50% more fraudulent transactions, investigate whether this reflects real fraud increases or model degradation.

For regression models, track prediction variance, outlier frequency, and distribution shape changes.
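For classification outputs, one simple check is the relative change in each class's share of predictions between a baseline period and a recent one. In this sketch (function names and the 0.5 threshold are illustrative assumptions), a fraud class whose share doubles is flagged, while the small matching dip in the legitimate class is not.

```python
import math
from collections import Counter
from typing import Dict, Hashable, List, Sequence


def class_share_changes(baseline_preds: Sequence[Hashable],
                        recent_preds: Sequence[Hashable]) -> Dict[Hashable, float]:
    """Relative change in each predicted class's share of outputs.
    0.5 means the class is predicted 50% more often than in the baseline."""
    base, recent = Counter(baseline_preds), Counter(recent_preds)
    n_base, n_recent = len(baseline_preds), len(recent_preds)
    changes: Dict[Hashable, float] = {}
    for cls in set(base) | set(recent):
        base_share = base[cls] / n_base
        recent_share = recent[cls] / n_recent
        # A class never predicted in the baseline counts as an unbounded change
        changes[cls] = ((recent_share - base_share) / base_share
                        if base_share > 0 else math.inf)
    return changes


def flag_shifted_classes(changes: Dict[Hashable, float],
                         max_relative_change: float = 0.5) -> List[Hashable]:
    """Classes whose share moved by at least max_relative_change in either direction."""
    return sorted((cls for cls, c in changes.items() if abs(c) >= max_relative_change),
                  key=str)
```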

Infrastructure and Performance Metrics

ML models have unique infrastructure requirements beyond traditional application monitoring:

- Inference latency: Track percentile distributions (P50, P95, P99), not just averages

- Throughput: Requests per second and batch processing rates

- Resource utilisation: GPU memory, CPU usage, and model serving costs

- Error rates: Failed predictions, timeouts, and service unavailability

- Cost per prediction: Track inference costs as model usage scales
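The gap between averages and percentiles is easy to demonstrate. This sketch uses the nearest-rank percentile definition (one of several in common use) and hypothetical latency data of 98 fast requests and 2 very slow ones: the mean stays under 80 ms while P99 is 2,000 ms. The cost helper assumes you can attribute a total inference cost to a known number of predictions; all function names are illustrative.

```python
import math
from typing import Dict, Sequence


def percentile(values: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest value with at least pct% of
    observations at or below it."""
    if len(values) == 0:
        raise ValueError("no values")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def latency_summary(latencies_ms: Sequence[float]) -> Dict[str, float]:
    """P50/P95/P99 alongside the mean, so tail latency is visible."""
    summary = {f"p{p}": percentile(latencies_ms, p) for p in (50, 95, 99)}
    summary["mean"] = sum(latencies_ms) / len(latencies_ms)
    return summary


def cost_per_prediction(total_inference_cost: float, prediction_count: int) -> float:
    if prediction_count <= 0:
        raise ValueError("prediction_count must be positive")
    return total_inference_cost / prediction_count


# Hypothetical sample: two slow requests barely move the mean but dominate P99
summary = latency_summary([40.0] * 98 + [2000.0] * 2)
```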

Business Impact Metrics

Ultimately, model performance must tie to business outcomes. Connect technical metrics to revenue, user engagement, operational efficiency, or other KPIs your model aims to improve.

For e-commerce recommendation systems, track conversion rates and average order values. For predictive maintenance models, monitor equipment downtime and maintenance cost savings.

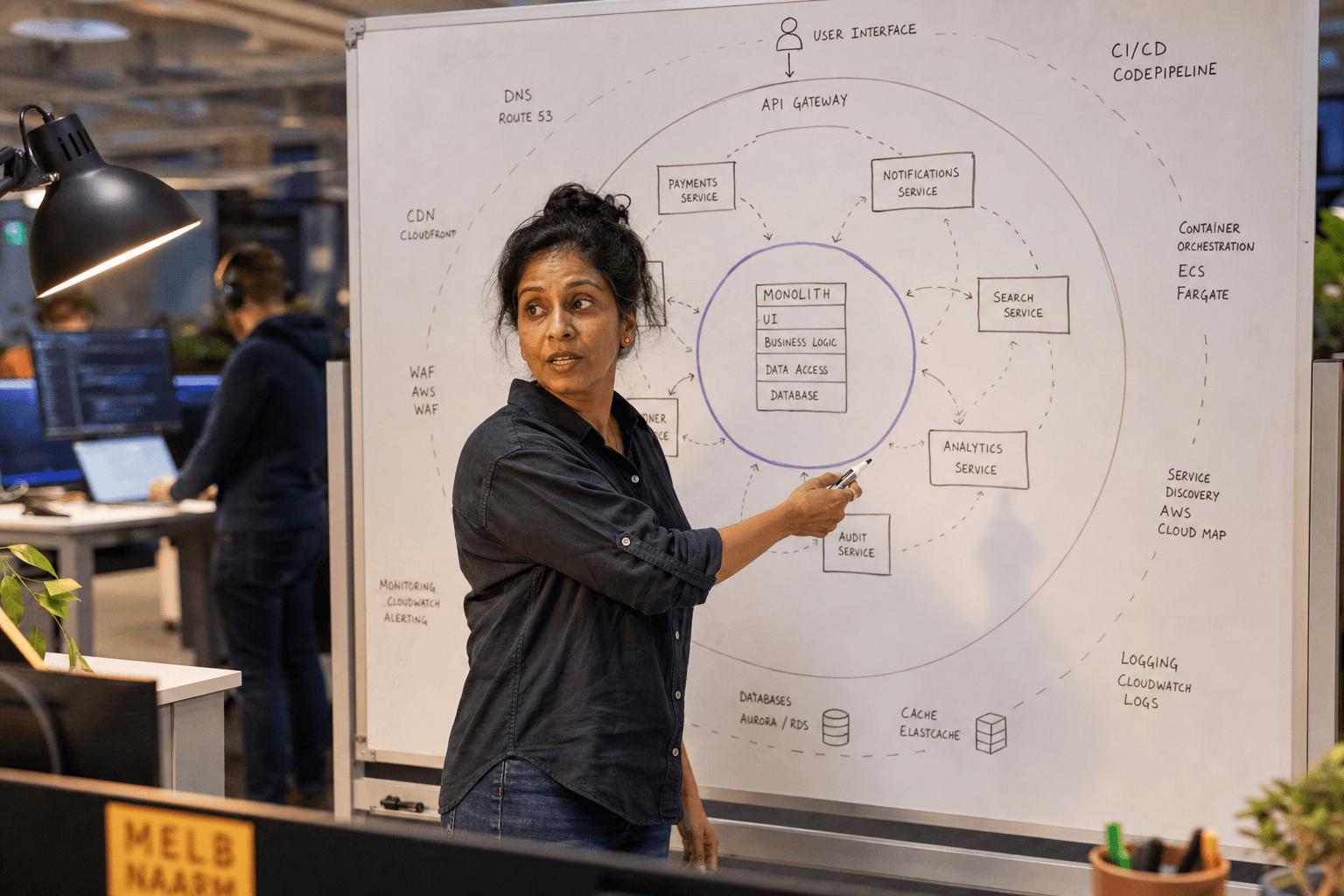

Practical Alerting Strategies

Tiered Alert Severity


Implement three alert levels based on business impact and urgency:

Critical alerts for immediate business risk: Model serving failures, accuracy drops below minimum viable thresholds, or security incidents. Route to on-call engineers with SMS/phone alerts.

Warning alerts for degrading performance: Moderate accuracy drift, rising latency, or increasing error rates. Send to team Slack channels or email during business hours.

Info alerts for trend monitoring: Gradual data drift, cost increases, or performance pattern changes. Log to monitoring dashboards for weekly review.
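One way to encode the three tiers is a small classification function plus a routing table. This is a sketch: the channel names, the accuracy and drift thresholds, and the use of a single drift score are hypothetical placeholders to be replaced with your own integrations and business tolerances.

```python
from enum import Enum
from typing import Dict, List, Optional


class Severity(Enum):
    CRITICAL = "critical"
    WARNING = "warning"
    INFO = "info"


# Hypothetical channel names: map these to your paging, chat and dashboard integrations
ROUTES: Dict[Severity, List[str]] = {
    Severity.CRITICAL: ["sms", "phone"],
    Severity.WARNING: ["slack", "email"],
    Severity.INFO: ["dashboard"],
}


def classify(serving_up: bool, accuracy: float, drift_score: float,
             min_viable_accuracy: float = 0.85, warning_accuracy: float = 0.90,
             info_drift: float = 0.1) -> Optional[Severity]:
    """Map a model health snapshot to a severity tier (None means healthy).
    All default thresholds are illustrative, not recommendations."""
    if not serving_up or accuracy < min_viable_accuracy:
        return Severity.CRITICAL  # serving failure or below minimum viable accuracy
    if accuracy < warning_accuracy:
        return Severity.WARNING   # degrading but still usable
    if drift_score > info_drift:
        return Severity.INFO      # gradual drift worth a weekly look
    return None


def route(severity: Optional[Severity]) -> List[str]:
    """Channels to notify for a given severity; healthy snapshots go nowhere."""
    return ROUTES[severity] if severity is not None else []
```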

Alert Thresholds and Windows

Avoid alert fatigue by setting thresholds based on business tolerance, not statistical significance. A 2% accuracy drop might be statistically significant but business-irrelevant for some models.

Use rolling windows to smooth temporary fluctuations. Alert on sustained degradation over 1-4 hour windows rather than minute-by-minute variations.
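A sketch of window-based alerting, assuming metric samples arrive as `(timestamp, value)` pairs. It alerts on the mean over a trailing window (two hours by default, within the 1-4 hour range above) rather than on any single sample; the function name, default window and `min_samples` guard are illustrative assumptions.

```python
from datetime import datetime, timedelta
from typing import Sequence, Tuple


def sustained_breach(samples: Sequence[Tuple[datetime, float]], threshold: float,
                     now: datetime, window: timedelta = timedelta(hours=2),
                     min_samples: int = 10) -> bool:
    """True when the mean metric value over the trailing window is below
    threshold. Averaging smooths one-off dips, and min_samples avoids
    alerting on a sparsely populated window."""
    in_window = [value for ts, value in samples if now - window <= ts <= now]
    if len(in_window) < min_samples:
        return False
    return sum(in_window) / len(in_window) < threshold
```

With samples every 10 minutes, a single dip to 70% accuracy inside an otherwise healthy two-hour window does not fire, while a steady 86% against a 90% threshold does.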

Implement alert suppression to prevent notification storms. If accuracy is already below threshold, don't send additional alerts for related metrics until the primary issue is resolved.
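Suppression can be as simple as a dependency map between alerts. The alert names and the map below are hypothetical. The sketch applies the rule described above: while a primary alert is open, related alerts and repeats of the same alert are held back, and they can fire again once the primary is resolved.

```python
from typing import Dict, Set

# Hypothetical alert names: while a key is firing, the alerts in its set are suppressed
SUPPRESSED_BY: Dict[str, Set[str]] = {
    "accuracy_below_threshold": {"precision_below_threshold",
                                 "recall_below_threshold",
                                 "f1_below_threshold"},
}


class AlertSuppressor:
    def __init__(self, suppressed_by: Dict[str, Set[str]]) -> None:
        self.suppressed_by = suppressed_by
        self.active: Set[str] = set()

    def should_notify(self, name: str) -> bool:
        """Return True if a newly fired alert should be sent to a human."""
        if name in self.active:
            return False  # already firing: don't re-send the same alert
        if any(name in self.suppressed_by.get(primary, set()) for primary in self.active):
            return False  # a related primary alert is still open
        self.active.add(name)
        return True

    def resolve(self, name: str) -> None:
        """Mark an alert as resolved so it, and anything it suppressed, can fire again."""
        self.active.discard(name)
```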

Contextual Alerting

Enrich alerts with actionable context. Instead of "Model accuracy dropped to 85%," provide: "Fraud model accuracy dropped to 85% (below 90% threshold) over the last 2 hours. Top contributing factors: new payment processor data, unusual transaction volumes from APAC region."

Include links to investigation playbooks, relevant dashboards, and recent deployment information.
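A sketch of how the example message above might be assembled from structured fields, so every alert carries its threshold, window, suspected causes and links. The `AlertContext` fields are assumptions, and the `example.com` URLs are placeholders for your own runbooks, dashboards and deployment history.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class AlertContext:
    model: str
    metric: str
    value: float
    threshold: float
    window_hours: int
    contributing_factors: List[str] = field(default_factory=list)
    links: Dict[str, str] = field(default_factory=dict)


def format_alert(ctx: AlertContext) -> str:
    """Render what happened, against which threshold, over what window,
    the likely causes, and where to go next."""
    unit = "hour" if ctx.window_hours == 1 else "hours"
    lines = [
        f"{ctx.model} {ctx.metric} dropped to {ctx.value:.0%} "
        f"(below {ctx.threshold:.0%} threshold) over the last {ctx.window_hours} {unit}."
    ]
    if ctx.contributing_factors:
        lines.append("Top contributing factors: " + ", ".join(ctx.contributing_factors) + ".")
    for label, url in ctx.links.items():
        lines.append(f"{label}: {url}")
    return "\n".join(lines)


# Hypothetical example reproducing the fraud-model message
example = AlertContext(
    model="Fraud model", metric="accuracy", value=0.85, threshold=0.90, window_hours=2,
    contributing_factors=["new payment processor data",
                          "unusual transaction volumes from APAC region"],
    links={"Runbook": "https://example.com/runbooks/fraud-accuracy",
           "Dashboard": "https://example.com/dashboards/fraud",
           "Recent deployments": "https://example.com/deploys/fraud"},
)
```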

Tool Comparison: Monitoring Platforms

Weights & Biases (W&B)

Best for: Teams already using W&B for experiment tracking who want integrated production monitoring.

Strengths: Seamless integration with existing ML workflows, excellent visualisation capabilities, strong data drift detection, and collaborative debugging features.

Limitations: Higher cost for large-scale deployments, primarily focused on ML metrics rather than infrastructure monitoring.

Use case: Mid-market companies with dedicated ML teams who prioritise ML-native tooling over general observability.

Datadog

Best for: Organisations with existing Datadog infrastructure monitoring who want to extend observability to ML models.

Strengths: Unified observability platform, robust alerting and dashboard capabilities, strong APM integration, and comprehensive infrastructure monitoring.

Limitations: ML-specific features are less mature than those of dedicated ML monitoring tools, and advanced drift detection requires more configuration.

Use case: Companies with established DevOps practices who prefer consolidating monitoring tools rather than adding ML-specific solutions.

Custom Solutions

Best for: Organisations with specific monitoring requirements that off-the-shelf tools don't address, or those wanting full control over monitoring infrastructure.

Strengths: Complete customisation, integration with existing data pipelines, cost control, and ability to implement domain-specific metrics.

Limitations: Significant development and maintenance overhead, requires dedicated engineering resources, slower time-to-value.

Use case: Large organisations with strong engineering teams and unique monitoring needs that justify custom development investment.

Implementation Best Practices

Start Simple, Scale Gradually

Begin with basic accuracy and latency monitoring before implementing sophisticated drift detection. Many teams over-engineer monitoring systems that never get used because they're too complex to maintain.

Focus on metrics that directly impact business outcomes. If you can't explain why a metric matters to your product manager, don't monitor it.

Integrate with Existing Workflows

ML monitoring shouldn't exist in isolation. Connect alerts to your incident management system, link monitoring dashboards to your existing observability stack, and ensure alerts reach the right team members.

Use monitoring data to inform model retraining schedules. When drift scores exceed thresholds, trigger automated retraining pipelines or create tickets for manual model updates.
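A sketch of the decision between automated retraining and a manual ticket, assuming per-feature PSI scores and a boolean result from upstream data quality checks. Routing drift to a human when data quality checks fail is an illustrative design choice, not a standard: retraining on data from a broken pipeline would bake the bug into the next model. The names `DriftAction` and `plan_drift_response` are hypothetical.

```python
from enum import Enum
from typing import Dict, List, Tuple


class DriftAction(Enum):
    NONE = "none"
    RETRAIN = "retrain"  # kick off the automated retraining pipeline
    TICKET = "ticket"    # open a ticket for a human to investigate


def plan_drift_response(psi_by_feature: Dict[str, float], data_quality_ok: bool,
                        threshold: float = 0.2) -> Tuple[DriftAction, List[str]]:
    """Decide what to do with a batch of per-feature drift scores and return
    the action together with the (sorted) features that exceeded threshold."""
    drifted = sorted(f for f, score in psi_by_feature.items() if score > threshold)
    if not drifted:
        return DriftAction.NONE, drifted
    if data_quality_ok:
        return DriftAction.RETRAIN, drifted
    # Drift plus failed quality checks may be a pipeline bug, not a real shift
    return DriftAction.TICKET, drifted
```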

Document Alert Responses

Create runbooks for common alert scenarios. When accuracy drops, what steps should engineers take? When data drift is detected, how do you determine if it's a data quality issue or legitimate distribution change?

Document false positive patterns to refine alert thresholds over time. Track alert frequency and resolution times to identify monitoring system improvements.
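Tracking alert outcomes needs little more than one record per alert. This sketch assumes each resolved alert is logged with its fire and resolve times and a false-positive flag (the `AlertRecord` fields and `alert_stats` name are hypothetical), and summarises frequency, false-positive rate and mean time to resolve per alert type.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime
from typing import Dict, List, Sequence


@dataclass
class AlertRecord:
    name: str
    fired_at: datetime
    resolved_at: datetime
    false_positive: bool


def alert_stats(records: Sequence[AlertRecord]) -> Dict[str, Dict[str, float]]:
    """Per alert type: how often it fired, the share of firings later marked
    as false positives, and the mean minutes from firing to resolution."""
    grouped: Dict[str, List[AlertRecord]] = defaultdict(list)
    for record in records:
        grouped[record.name].append(record)
    stats: Dict[str, Dict[str, float]] = {}
    for name, group in grouped.items():
        minutes = [(r.resolved_at - r.fired_at).total_seconds() / 60 for r in group]
        stats[name] = {
            "count": len(group),
            "false_positive_rate": sum(r.false_positive for r in group) / len(group),
            "mean_minutes_to_resolve": sum(minutes) / len(minutes),
        }
    return stats
```

An alert type with a high false-positive rate is a natural candidate for a higher threshold or a longer window.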

The Cost of Poor Monitoring

Unmonitored AI models create hidden business risks. A recommendation engine with degraded performance might reduce conversion rates by 15% over months before anyone notices. A pricing model with data drift might systematically underprice products, eroding margins.

The cost of robust monitoring is typically 5-10% of your ML infrastructure spend. The cost of production model failures—revenue loss, customer churn, and engineering time to debug issues—often exceeds monitoring costs by 10x or more.

Production AI systems require production-grade monitoring. Treat ML monitoring as essential infrastructure, not optional tooling.

Building Your Monitoring Strategy

Effective AI model monitoring requires balancing technical depth with operational simplicity. Start with business-critical models, implement basic performance and accuracy tracking, then gradually add sophisticated drift detection and business impact metrics.

Remember that monitoring is only valuable if it leads to action. Design alerts that provide clear next steps and connect monitoring insights to your model improvement processes.

If you're building production AI systems and need guidance on monitoring strategy, our AI engineering team helps mid-market companies implement robust ML monitoring that scales with their business. We can assess your current monitoring gaps and design practical solutions that fit your team's capabilities. Get in touch to discuss your monitoring requirements.

Chris Kerr

Founder of Horizon Labs. Twenty years building production software for Australian mid-market businesses, the last seven focused on putting AI into systems that operate at 3am without anyone watching. Writes about strategy, fractional CTO work, and the operational discipline that separates AI demos from AI products.