AI UX Design: How to Design Interfaces That Users Actually Trust

AI UX design requires fundamentally different approaches than traditional software interfaces. Learn how to build user trust through confidence indicators, transparency, and seamless human-AI collaboration workflows.

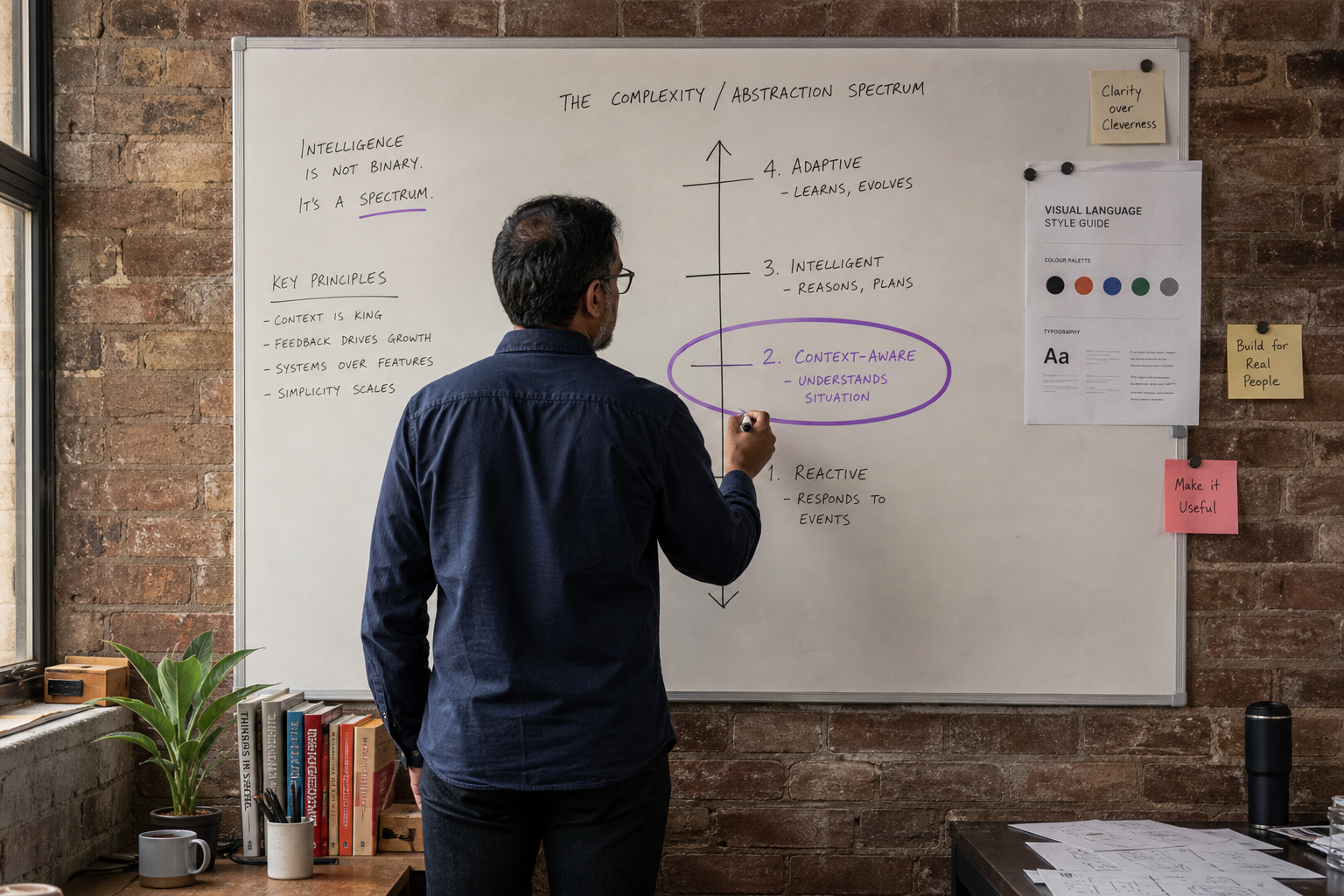

AI UX design is the practice of creating user interfaces that make artificial intelligence systems transparent, trustworthy, and usable for humans. Unlike traditional software design, AI interfaces must communicate uncertainty, explain decisions, and maintain user agency when algorithms make mistakes or operate beyond their training boundaries.

Building trust in AI systems isn't just about making them accurate—it's about designing interfaces that help users understand when to trust the AI, when to question it, and when to take control. This becomes critical as AI moves from experimental tools to production systems handling real business decisions.

Why Traditional UX Patterns Fail for AI Products

Traditional UX design assumes deterministic outcomes—click a button, get a predictable result. AI systems operate differently. They make probabilistic decisions, can fail in unexpected ways, and often work with incomplete information. This fundamental difference breaks many established UX patterns.

Consider a standard form validation pattern. Traditional software shows clear error states: "Email format invalid." But what happens when an AI system flags a customer inquiry as potentially fraudulent with 67% confidence? The binary pass/fail pattern doesn't work.

Australian fintech companies learned this lesson during the Banking Royal Commission fallout. Banks implementing AI for loan decisions found that traditional approve/reject interfaces created compliance risks. When algorithms couldn't explain their reasoning in plain language, human reviewers couldn't validate decisions—creating both regulatory and user trust problems.

The Uncertainty Problem

AI systems deal in probabilities, not certainties. A recommendation engine might suggest three equally valid solutions. A computer vision system might identify an object with 85% confidence. Traditional interfaces don't have patterns for communicating this uncertainty effectively.

Research from Melbourne University's HCI lab shows users prefer AI systems that communicate uncertainty clearly rather than systems that appear confident but occasionally fail dramatically. Users develop better mental models when interfaces show confidence levels, alternative interpretations, and decision boundaries.

Core Principles of Trustworthy AI UX Design

1. Communicate Confidence Explicitly

Users trust AI systems more when they understand the system's confidence in its outputs. This means designing interfaces that show not just what the AI decided, but how certain it is about that decision.

Effective confidence indicators go beyond simple percentage scores. They provide contextual information about what affects confidence levels:

- Data quality indicators: "Based on 47 similar customer cases"

- Boundary conditions: "Confidence decreases for purchases over $10,000"

- Temporal factors: "This prediction is most accurate within 30 days"

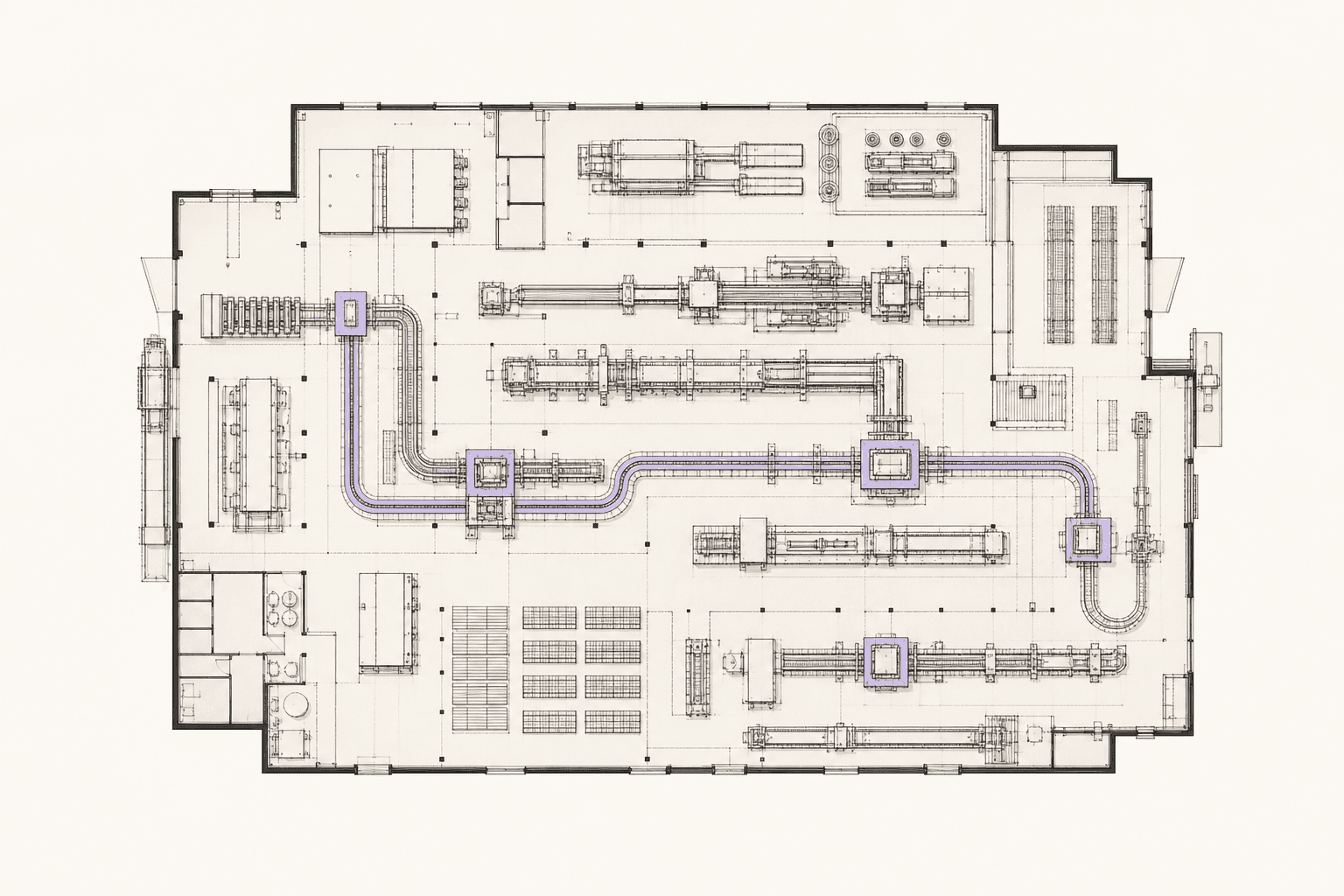

A logistics AI platform we built for a Melbourne distribution company shows confidence levels using colour-coded delivery predictions. Green indicates high confidence (>90%), yellow shows moderate confidence (70-90%), and orange flags low confidence (<70%) with explanations like "Weather data incomplete for this route."
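As a rough illustration, the colour banding described above could be sketched like this. The names `ConfidenceBadge` and `confidence_badge` are hypothetical, and treating exactly 90% as yellow and exactly 70% as yellow are assumptions; this is not the code from the platform mentioned.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ConfidenceBadge:
    colour: str
    label: str
    explanation: Optional[str] = None


def confidence_badge(confidence: float, reason: Optional[str] = None) -> ConfidenceBadge:
    """Map a 0-1 confidence score to a colour band (thresholds from the text)."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence > 0.90:
        return ConfidenceBadge("green", "High confidence")
    if confidence >= 0.70:
        return ConfidenceBadge("yellow", "Moderate confidence")
    # Low confidence always carries an explanation so the user knows why.
    return ConfidenceBadge("orange", "Low confidence", reason or "Reason not available")


badge = confidence_badge(0.62, "Weather data incomplete for this route")
print(badge.colour, "-", badge.explanation)
```

Keeping the explanation on the badge itself means the low-confidence state cannot be rendered without some reason attached.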

2. Design for Graceful Degradation

AI systems fail differently from traditional software. Instead of crashing outright, they tend to become gradually less accurate or less confident. Your interface should degrade gracefully in step with the model, handing more control back to the user as confidence falls.

This involves creating fallback patterns that maintain functionality as AI confidence decreases:

| Confidence Level | Interface Response | User Action Available |
|---|---|---|
| High (>90%) | Direct recommendation | Accept/reject |
| Medium (70-90%) | Multiple options shown | Choose from alternatives |
| Low (<70%) | Manual override suggested | Full manual control |

The key is matching interface complexity to AI confidence. When the system is certain, streamline the interface. When uncertainty increases, provide more user control and context.
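One way to express this matching in code is a small planner that picks an interface mode from the confidence score. This is a minimal sketch: `InterfaceMode`, `plan_interface`, and `max_alternatives` are hypothetical names, and showing no AI options at all in the low-confidence case is an assumption rather than a rule from the table.

```python
from enum import Enum
from typing import Dict, List


class InterfaceMode(Enum):
    DIRECT_RECOMMENDATION = "direct recommendation"
    MULTIPLE_OPTIONS = "multiple options shown"
    MANUAL_OVERRIDE = "manual override suggested"


# User actions offered in each mode, mirroring the table above.
USER_ACTIONS: Dict[InterfaceMode, List[str]] = {
    InterfaceMode.DIRECT_RECOMMENDATION: ["accept", "reject"],
    InterfaceMode.MULTIPLE_OPTIONS: ["choose from alternatives"],
    InterfaceMode.MANUAL_OVERRIDE: ["full manual control"],
}


def plan_interface(ranked_options: List[str], confidence: float,
                   max_alternatives: int = 3) -> dict:
    """Decide what to render; ranked_options is ordered best-first."""
    if confidence > 0.90:
        mode, shown = InterfaceMode.DIRECT_RECOMMENDATION, ranked_options[:1]
    elif confidence >= 0.70:
        mode, shown = InterfaceMode.MULTIPLE_OPTIONS, ranked_options[:max_alternatives]
    else:
        # Assumption: hide AI options entirely and hand over to the user.
        mode, shown = InterfaceMode.MANUAL_OVERRIDE, []
    return {"mode": mode, "options": shown, "actions": USER_ACTIONS[mode]}


print(plan_interface(["Route A", "Route B", "Route C", "Route D"], 0.82))
```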

3. Enable Progressive Disclosure of AI Reasoning

Users need different levels of detail about AI decisions depending on their role, expertise, and the decision's impact. Progressive disclosure lets users drill down from high-level summaries to detailed explanations as needed.

A three-tier explanation structure works well:

- Surface level: "Flagged for review: unusual transaction pattern"

- Reasoning level: "Amount 40% higher than typical, new vendor, outside business hours"

- Technical level: "Random Forest model, features: amount_zscore=2.1, vendor_age=0, time_anomaly=0.87"

This approach serves both non-technical users who need quick context and technical users who need full decision transparency for debugging or compliance.
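The three tiers can be modelled as one explanation record that is rendered to different depths. The sketch below is illustrative only: `DecisionExplanation`, `disclose`, and the field names are assumptions, and the sample values are the ones quoted in the list above.

```python
from dataclasses import dataclass
from typing import Dict, List

LEVELS = ("surface", "reasoning", "technical")


@dataclass
class DecisionExplanation:
    surface: str
    reasoning: List[str]
    model_name: str
    technical_features: Dict[str, float]


def disclose(explanation: DecisionExplanation, level: str = "surface") -> List[str]:
    """Return explanation lines from the surface tier down to the requested tier."""
    if level not in LEVELS:
        raise ValueError(f"level must be one of {LEVELS}")
    depth = LEVELS.index(level)
    lines = [explanation.surface]
    if depth >= 1:
        lines.append(", ".join(explanation.reasoning))
    if depth >= 2:
        features = ", ".join(f"{k}={v}" for k, v in explanation.technical_features.items())
        lines.append(f"{explanation.model_name} model, features: {features}")
    return lines


flag = DecisionExplanation(
    surface="Flagged for review: unusual transaction pattern",
    reasoning=["Amount 40% higher than typical", "new vendor", "outside business hours"],
    model_name="Random Forest",
    technical_features={"amount_zscore": 2.1, "vendor_age": 0, "time_anomaly": 0.87},
)
for line in disclose(flag, "technical"):
    print(line)
```

Because every tier is derived from the same record, the summary a reviewer sees cannot drift away from the technical detail an engineer sees.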

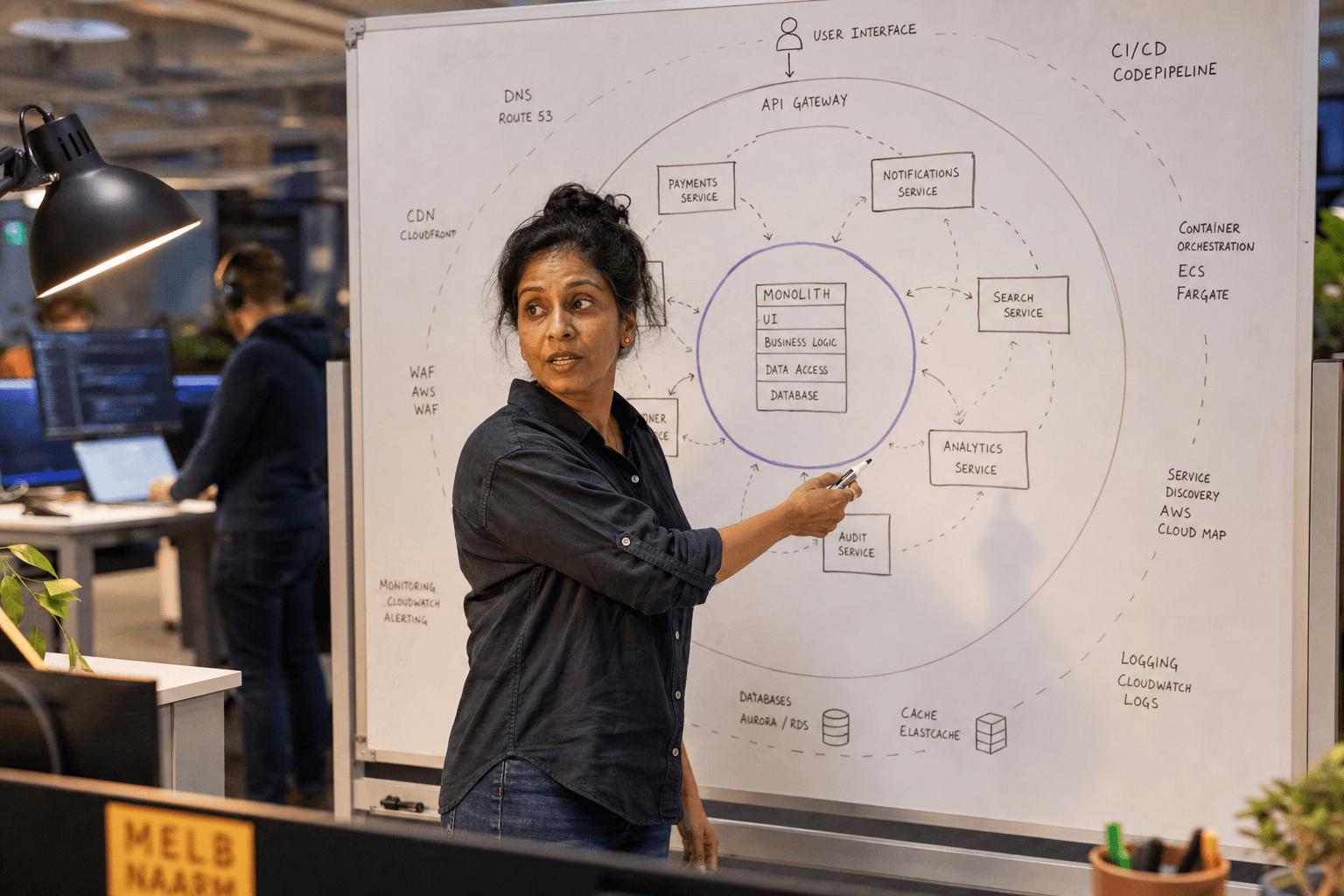

Human-in-the-Loop Workflow Design

Human-in-the-loop (HITL) workflows are essential for AI systems handling high-stakes decisions. The UX challenge is designing handoffs between AI and humans that maintain context, preserve decision history, and enable smooth escalation.

Seamless Escalation Patterns

When AI confidence drops below acceptable thresholds, the system should seamlessly escalate to human oversight. This escalation must preserve all relevant context and decision history.

Effective escalation interfaces include:

- Complete decision trail: What the AI considered and why

- Relevant data highlights: Key factors influencing the decision

- Suggested next actions: Based on similar historical cases

- Confidence boundaries: When to trust vs. verify AI recommendations

An inventory management system we designed for a Perth mining supplier includes escalation workflows for procurement decisions. When AI recommends unusual supplier changes, the interface packages all decision context for human review, including price comparisons, supplier history, and risk factors.
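A hedged sketch of what "packaging context" might look like in code follows. The types `AIDecision` and `EscalationPackage`, the function `maybe_escalate`, and the 70% default threshold are all assumptions made for illustration; they are not taken from the system described above.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class AIDecision:
    recommendation: str
    confidence: float
    decision_trail: List[str]    # what the AI considered and why
    key_factors: Dict[str, str]  # data highlights for the reviewer


@dataclass
class EscalationPackage:
    recommendation: str
    confidence: float
    decision_trail: List[str]
    key_factors: Dict[str, str]
    suggested_actions: List[str] = field(default_factory=list)
    reason: str = ""


def maybe_escalate(decision: AIDecision, threshold: float = 0.70,
                   suggested_actions: Optional[List[str]] = None) -> Optional[EscalationPackage]:
    """Return a review package when confidence is below threshold, else None."""
    if decision.confidence >= threshold:
        return None
    return EscalationPackage(
        recommendation=decision.recommendation,
        confidence=decision.confidence,
        decision_trail=list(decision.decision_trail),  # copy so history is preserved
        key_factors=dict(decision.key_factors),
        suggested_actions=list(suggested_actions or []),
        reason="Confidence below escalation threshold",
    )


decision = AIDecision("Switch to supplier B", 0.55,
                      ["Compared three quotes", "Supplier B has no order history"],
                      {"price": "12% lower", "supplier history": "none"})
print(maybe_escalate(decision, suggested_actions=["Request references"]))
```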

Feedback Loop Integration

Users must be able to correct AI decisions easily, and these corrections should improve future performance. This requires designing feedback mechanisms that capture not just what was wrong, but why it was wrong.

Good feedback interfaces allow users to:

- Mark predictions as correct/incorrect

- Explain the reasoning behind corrections

- Indicate confidence in their own judgment

- Suggest alternative interpretations

This feedback becomes training data for improving AI models, creating a virtuous cycle between user trust and system performance.
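A feedback record along these lines could capture the four items listed above. This is a sketch under assumptions: the `Feedback` class, its field names, and the rule that a correction must carry a reason are illustrative choices, not a prescribed schema.

```python
from dataclasses import dataclass
from typing import Optional

REVIEWER_CONFIDENCE = ("low", "medium", "high")


@dataclass
class Feedback:
    prediction_id: str
    is_correct: bool
    reason: str = ""                      # why the prediction was wrong
    reviewer_confidence: str = "medium"   # the user's confidence in their own judgment
    alternative: Optional[str] = None     # suggested alternative interpretation

    def __post_init__(self) -> None:
        if self.reviewer_confidence not in REVIEWER_CONFIDENCE:
            raise ValueError(f"reviewer_confidence must be one of {REVIEWER_CONFIDENCE}")
        # Assumption: a correction without a reason is rejected, so the
        # system learns why something was wrong, not only that it was.
        if not self.is_correct and not self.reason.strip():
            raise ValueError("corrections must include a reason")

    def to_record(self) -> dict:
        """Flatten into a dict that a training pipeline could consume."""
        return {
            "prediction_id": self.prediction_id,
            "label": "correct" if self.is_correct else "incorrect",
            "reason": self.reason,
            "reviewer_confidence": self.reviewer_confidence,
            "alternative": self.alternative,
        }


fb = Feedback("pred-1", is_correct=False, reason="Vendor is a known subsidiary",
              reviewer_confidence="high", alternative="Approve")
print(fb.to_record())
```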

Designing for AI Transparency

Show the System's Limitations

Counter-intuitively, showing AI limitations increases user trust. When users understand what the system cannot do, they develop more accurate expectations and trust the system more within its known capabilities.

Transparency mechanisms include:

- Training data descriptions: "This model was trained on Australian retail data from 2020-2024"

- Known blind spots: "Accuracy decreases for products launched within 30 days"

- Update frequencies: "Model retrained monthly with new sales data"

- Performance metrics: "Currently achieving 87% accuracy on similar predictions"

Real-Time Performance Indicators

Users should understand how well the AI system is currently performing, not just its historical accuracy. This helps them calibrate trust based on current conditions.

A customer service AI we built includes a live performance dashboard showing:

- Current response accuracy (updated hourly)

- Known issues affecting performance

- When the system was last updated

- Performance comparisons to human agents

This transparency helps customer service managers understand when to rely on AI recommendations versus human judgment.
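For the "current accuracy" figure, one simple option is accuracy over a recent time window. The sketch below assumes outcomes are logged as `(timestamp, was_correct)` pairs; the function name `recent_accuracy` and the one-hour default window are hypothetical.

```python
from datetime import datetime, timedelta
from typing import List, Optional, Tuple


def recent_accuracy(outcomes: List[Tuple[datetime, bool]], now: datetime,
                    window: timedelta = timedelta(hours=1)) -> Optional[float]:
    """Share of correct responses within the window ending at `now`."""
    recent = [ok for ts, ok in outcomes if now - window <= ts <= now]
    if not recent:
        return None  # no recent data: show "unknown" rather than a stale number
    return sum(recent) / len(recent)


now = datetime(2024, 1, 1, 12, 0)
log = [
    (now - timedelta(minutes=10), True),
    (now - timedelta(minutes=30), False),
    (now - timedelta(hours=3), False),  # outside the window, ignored
]
print(recent_accuracy(log, now))
```

Returning `None` when there is no recent data lets the dashboard say so explicitly instead of implying a confident figure.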

Common AI UX Anti-Patterns to Avoid

1. False Precision

Showing AI confidence as precise percentages ("87.3% confident") creates false precision. Most AI models aren't calibrated to this level, making specific percentages misleading.

Better approaches use confidence bands ("High/Medium/Low") or ranges ("80-90% confident") that more accurately represent the system's actual precision.
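Converting a raw score into a range is a small formatting step. In this sketch the function name `confidence_range` and the 10-point bucket width are assumptions; the right width depends on how well calibrated the model actually is.

```python
def confidence_range(confidence: float, bucket_width: int = 10) -> str:
    """Format a 0-1 confidence score as a percentage range, not a point value."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if bucket_width <= 0 or 100 % bucket_width != 0:
        raise ValueError("bucket_width must divide 100 evenly")
    # Round first to avoid floating-point artefacts such as 56.99999999.
    percent = round(confidence * 100, 6)
    lower = int(percent // bucket_width) * bucket_width
    lower = min(lower, 100 - bucket_width)  # keep 100% inside the top bucket
    return f"{lower}-{lower + bucket_width}% confident"


print(confidence_range(0.873))
```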

2. Black Box Decisions

Presenting AI outputs without any explanation reduces trust and prevents users from catching errors. Even simple explanations like "Based on recent purchase history" help users validate recommendations.

3. Anthropomorphising the System

Describing AI systems using human terms ("The AI thinks...", "Sarah the Assistant believes...") creates false expectations about the system's capabilities and consciousness. Use neutral language that accurately describes computational processes.

4. Binary Trust Assumptions

Designing interfaces that assume users either fully trust or completely distrust AI misses the nuanced reality of human-AI interaction. Users develop conditional trust based on context, accuracy, and stakes.

Implementation Guidelines for Australian Businesses

When implementing AI UX design in Australian contexts, consider specific regulatory and cultural factors:

Privacy and Transparency Requirements

The Privacy Act 1988 and emerging AI regulation are raising expectations around transparency in automated decision-making. Your AI interfaces should provide clear explanations that satisfy both user needs and the regulatory obligations that apply to your use case.

Design explanation systems that can generate both user-friendly summaries and detailed compliance reports from the same underlying decision data.

Cultural Considerations

Australian users typically prefer straightforward communication over marketing-speak. AI interfaces should use clear, direct language about capabilities and limitations. Avoid Silicon Valley-style hype about AI capabilities.

Accessibility Requirements

Ensure AI interfaces meet WCAG 2.1 AA standards. This includes providing alternative text for AI-generated visual content and ensuring all AI decision information is available to screen readers.

Measuring Trust in AI Interfaces

Trust in AI systems can be measured through both quantitative and qualitative metrics:

Quantitative metrics:

- Task completion rates when AI recommendations are available

- Frequency of user overrides of AI decisions

- Time spent reviewing AI explanations

- User retention in AI-powered workflows

Qualitative indicators:

- User confidence surveys after AI interactions

- Feedback quality and frequency

- Support ticket content related to AI decisions

- User interviews about AI trust factors

Regular measurement helps identify trust issues before they impact business outcomes or user satisfaction.
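Several of the quantitative metrics can be computed from a plain interaction log. The sketch below assumes one `InteractionEvent` per task; the class, its fields, and `trust_metrics` are hypothetical names, and a real product would likely segment these figures by user role and decision type.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class InteractionEvent:
    recommendation_shown: bool
    overridden: bool
    task_completed: bool
    explanation_view_seconds: float = 0.0


def trust_metrics(events: List[InteractionEvent]) -> Optional[Dict[str, float]]:
    """Summarise override rate, completion rate, and explanation review time."""
    shown = [e for e in events if e.recommendation_shown]
    if not shown:
        return None  # nothing to measure yet
    n = len(shown)
    return {
        "override_rate": sum(e.overridden for e in shown) / n,
        "completion_rate": sum(e.task_completed for e in shown) / n,
        "mean_explanation_seconds": sum(e.explanation_view_seconds for e in shown) / n,
    }


events = [
    InteractionEvent(True, False, True, 4.0),
    InteractionEvent(True, True, True, 12.0),
    InteractionEvent(True, False, False, 0.0),
    InteractionEvent(True, False, True, 4.0),
    InteractionEvent(False, False, True),  # no recommendation shown, excluded
]
print(trust_metrics(events))
```

An override rate near zero is not automatically good news: it can indicate over-reliance just as easily as well-placed trust, so it is best read alongside the qualitative indicators.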

Successful AI UX design requires close collaboration between design, engineering, and product strategy teams. Our AI product strategy services help define user experience requirements that balance technical capabilities with user needs, while our AI engineering team ensures the underlying systems can support transparent, explainable interfaces. For organisations looking to integrate AI UX principles into existing products, our application modernisation approach redesigns legacy interfaces to accommodate AI-driven features and workflows.

Building Trust Through Iterative Design

Trustworthy AI UX emerges through iteration based on real user behaviour and feedback. Start with high-transparency interfaces that show more context than users might initially need. As trust builds and users develop better mental models, you can streamline interfaces while maintaining essential transparency.

The goal isn't to make AI invisible—it's to make AI understandable, reliable within known bounds, and genuinely useful for human decision-making. When users understand what AI can and cannot do, when they can predict how it will behave, and when they maintain appropriate control, trust follows naturally.

AI UX design requires rethinking fundamental assumptions about human-computer interaction. By focusing on transparency, appropriate trust calibration, and seamless human-AI collaboration, you can build interfaces that users actually want to use—and that deliver real business value.

Chris Kerr

Founder of Horizon Labs. Twenty years building production software for Australian mid-market businesses, the last seven focused on putting AI into systems that operate at 3am without anyone watching. Writes about strategy, fractional CTO work, and the operational discipline that separates AI demos from AI products.