Scaling AI Pilots: From Proof of Concept to Production

Most AI pilots never make it to production due to the significant gap between controlled proof-of-concept environments and production reality. This guide explores the four key gaps that prevent scaling and provides a structured approach to bridge them.

What Happens After the AI Pilot? Scaling from Proof of Concept to Production

Your AI pilot worked. The demo impressed stakeholders. The proof of concept showed promise. But now comes the hard part: getting from notebook to production.

Few pilots get that far. Organisations are eager to experiment with AI, yet moving from a successful proof of concept to a production deployment remains one of the biggest challenges in enterprise AI adoption.

Why AI Pilots Fail to Scale

AI pilots fail to scale because they operate in controlled environments with clean data and simplified assumptions that do not reflect production reality. The gap between a working demo and a production system is substantial.

The pilot environment is forgiving. Data is curated, edge cases are ignored, and performance is measured under ideal conditions. Production is unforgiving. Data is messy, users behave unpredictably, and systems must handle failure gracefully.

The Demo vs Production Reality

Pilot projects typically work with carefully selected datasets and simplified scenarios. They prove that the technology can work under controlled conditions but often overlook the complexity of real-world deployment.

Production environments require handling diverse data sources, managing multiple user types and workflows, processing adversarial or unexpected inputs, implementing automated monitoring and alerting, building enterprise-grade reliability, and ensuring engineering teams can maintain what data scientists built.

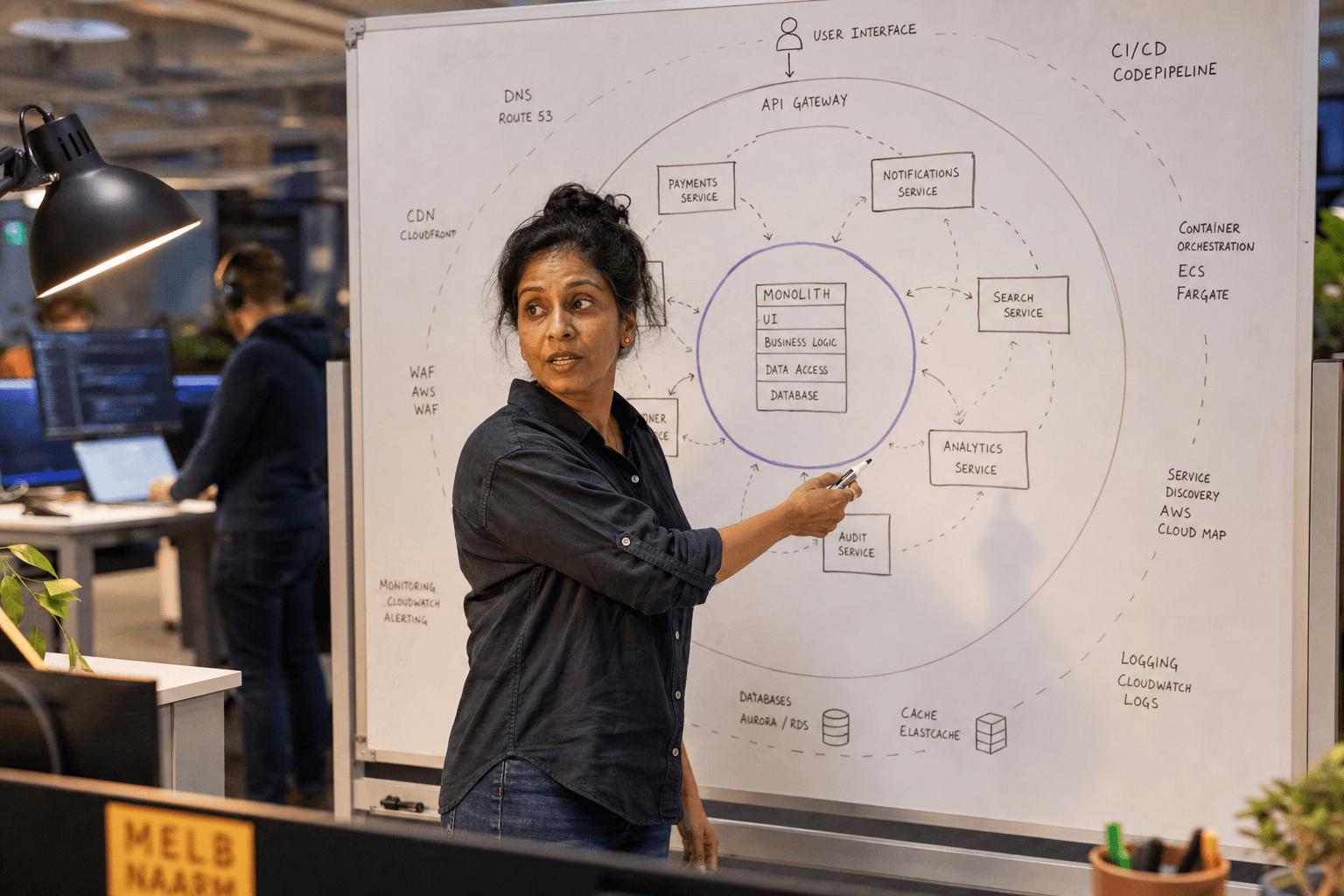

The Four Production Readiness Gaps

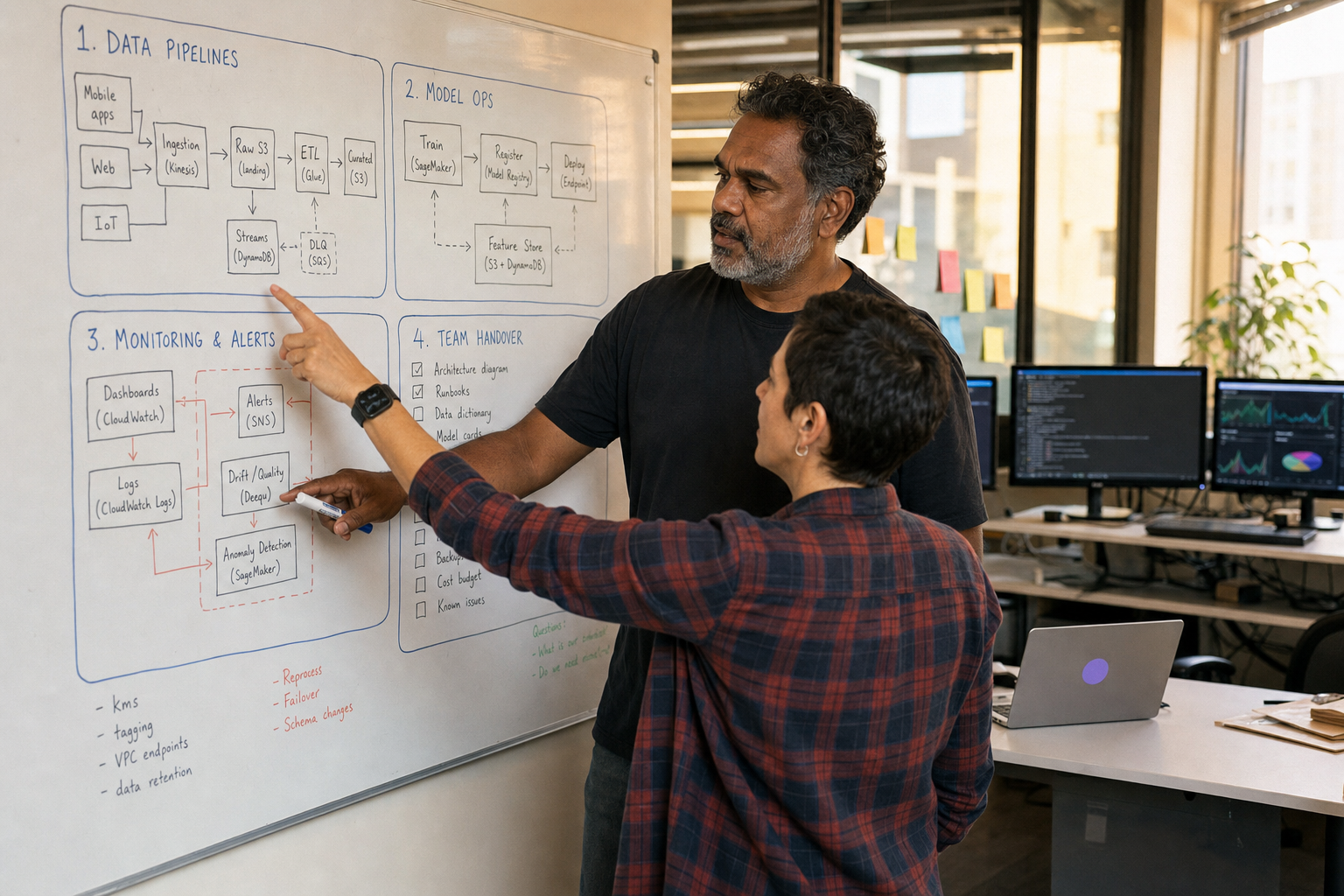

1. Data Infrastructure Gap

Pilots often run on CSV files or database exports. Production AI needs real-time data pipelines that can handle volume, velocity, and variety.

Your pilot might work with carefully selected records. Production needs to process large volumes daily, with data arriving from multiple systems, in different formats, with varying quality levels.

Building robust data infrastructure means implementing data validation, transformation pipelines, and quality monitoring. It means handling schema changes, managing data lineage, and ensuring compliance with data governance policies.
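As a rough illustration of the validation step, the sketch below checks incoming records against a simple schema and keeps rejected records alongside a reason, so quality problems can be counted and monitored instead of silently dropped. The schema, the field names, and the `validate_records` helper are hypothetical and not taken from any particular pipeline.

```python
from dataclasses import dataclass

# Hypothetical schema for illustration: field name -> (expected type, required)
SCHEMA = {
    "customer_id": (str, True),
    "amount": (float, True),
    "channel": (str, False),
}


@dataclass
class ValidationResult:
    valid: list     # records that passed every check
    rejected: list  # (record, reason) pairs for records that failed


def validate_records(records, schema=SCHEMA):
    """Split records into valid and rejected, recording the first failure reason."""
    valid, rejected = [], []
    for record in records:
        reason = None
        for field, (expected_type, required) in schema.items():
            value = record.get(field)
            if value is None:
                if required:
                    reason = f"missing required field: {field}"
                    break
                continue  # optional field absent: nothing to check
            if not isinstance(value, expected_type):
                reason = f"wrong type for {field}: {type(value).__name__}"
                break
        if reason is None:
            valid.append(record)
        else:
            rejected.append((record, reason))
    return ValidationResult(valid, rejected)
```

In a real pipeline the rejected list would typically feed a quarantine table and a quality metric, so a sudden rise in rejections becomes visible.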

2. Model Operations Gap

A model that works in Jupyter notebooks needs to become a service that other systems can call reliably. This requires containerisation, API development, version management, and deployment automation.

MLOps is not just DevOps for models. It includes model versioning, A/B testing frameworks, performance monitoring, and automated retraining pipelines. Production models need to detect when their performance degrades and trigger appropriate responses.
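One way to detect degradation, sketched here under simplifying assumptions, is to track accuracy over a rolling window of labelled predictions and flag the model when it falls a set margin below its baseline. The `DegradationMonitor` class, the tolerance, and the window size are illustrative choices, and the sketch assumes ground-truth labels eventually arrive for each prediction.

```python
from collections import deque


class DegradationMonitor:
    """Tracks rolling accuracy over the most recent labelled predictions."""

    def __init__(self, baseline_accuracy, tolerance=0.05, window=100):
        self.baseline = baseline_accuracy
        self.tolerance = tolerance
        # Old outcomes fall off automatically once the window is full.
        self.outcomes = deque(maxlen=window)

    def record(self, prediction, actual):
        self.outcomes.append(prediction == actual)

    def rolling_accuracy(self):
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def needs_retraining(self):
        # Only decide once the window is full, to avoid reacting to noise.
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return self.rolling_accuracy() < self.baseline - self.tolerance
```

What happens when `needs_retraining()` returns true (an alert, a retraining job, or a rollback) is a policy decision that sits outside this sketch.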

3. Monitoring and Observability Gap

Pilots are monitored manually by data scientists. Production systems need automated monitoring that tracks model performance, data quality, system health, and business metrics.

This includes detecting data drift (when the distribution of input data shifts away from what the model was trained on), concept drift (when the relationship between inputs and outputs changes), and performance degradation. Production monitoring must alert teams before problems affect users.
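Data drift on a single numeric feature can be estimated in several ways. The sketch below uses the Population Stability Index (PSI), one common choice, comparing a reference sample (for example, training data) with recent production values. The bin count and the small floor used to avoid taking the log of zero are assumptions made for the example.

```python
import math


def population_stability_index(reference, current, bins=10):
    """Compare two samples of one numeric feature using PSI.

    Bin edges come from the reference sample; current values that fall
    outside the reference range are clamped into the first or last bin.
    """
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # guard against a constant feature

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)
            counts[idx] += 1
        eps = 1e-6  # floor so empty bins do not produce log(0)
        return [max(c / len(values), eps) for c in counts]

    ref_p = proportions(reference)
    cur_p = proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))
```

A frequently quoted rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift, but suitable thresholds depend on the feature and should be tuned against historical data. Concept drift cannot be detected this way, because it requires comparing predictions with actual outcomes.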

4. Team Handover Gap

Data scientists build pilots. Engineering teams maintain production systems. This handover often fails because the systems, tools, and processes are different.

Production AI systems must be maintainable by engineers who did not build the original model. This requires documentation, standardised architectures, and operational procedures that fit existing engineering practices.

What Production-Ready AI Actually Requires

Technical Infrastructure

Production AI needs enterprise-grade infrastructure. This includes container orchestration, load balancing, auto-scaling, and disaster recovery. The system must handle traffic spikes, service failures, and maintenance windows.

Security becomes critical. Production systems need authentication, authorisation, audit logging, and compliance with regulatory requirements. API keys, model weights, and training data must be protected.

Data Engineering

Production requires robust data pipelines that can ingest, validate, transform, and serve data reliably. These pipelines must handle late-arriving data, duplicate records, and system outages gracefully.
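A minimal sketch of handling duplicates and late-arriving data follows, assuming each event carries a unique id and an event timestamp. The key names `event_id` and `event_time`, the `process_batch` helper, and the one-hour lateness allowance are hypothetical.

```python
from datetime import datetime, timedelta


def process_batch(events, seen_ids, watermark, allowed_lateness=timedelta(hours=1)):
    """Split events into accepted, duplicate, and too-late groups.

    events: iterable of dicts with hypothetical keys "event_id" and "event_time".
    seen_ids: set of already-processed ids, updated in place.
    watermark: latest event time the pipeline considers complete.
    """
    accepted, duplicates, too_late = [], [], []
    for event in events:
        if event["event_id"] in seen_ids:
            duplicates.append(event)
        elif event["event_time"] < watermark - allowed_lateness:
            # Older than the lateness allowance: route to a backfill path.
            too_late.append(event)
        else:
            seen_ids.add(event["event_id"])
            accepted.append(event)
    return accepted, duplicates, too_late
```

In production the set of seen ids would need to live in durable storage with an expiry policy. An in-memory set is used here only to keep the example self-contained.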

Data quality monitoring becomes essential. Production systems need to detect when data quality degrades and take appropriate action, whether that is alerting operators or falling back to alternative approaches.

Model Lifecycle Management

Production models need versioning, testing, and deployment processes. Teams must be able to roll back to previous versions, test new models against baselines, and deploy updates without downtime.
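To make the rollback requirement concrete, here is a toy in-memory registry that records which version is live and can step back to the previous one. The `ModelRegistry` class and its methods are invented for illustration. A real deployment would more likely use a dedicated model registry backed by durable storage.

```python
class ModelRegistry:
    """Toy registry: tracks registered versions and the promotion history."""

    def __init__(self):
        self._versions = {}  # version -> metadata such as evaluation metrics
        self._history = []   # promotion order, most recent last

    def register(self, version, metrics):
        if version in self._versions:
            raise ValueError(f"version already registered: {version}")
        self._versions[version] = {"metrics": metrics}

    def promote(self, version):
        if version not in self._versions:
            raise KeyError(f"unknown version: {version}")
        self._history.append(version)

    @property
    def live(self):
        return self._history[-1] if self._history else None

    def rollback(self):
        """Make the previously promoted version live again."""
        if len(self._history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self._history.pop()
        return self.live
```

Keeping the promotion history, not just the current pointer, is what makes rollback a single cheap operation.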

This includes managing multiple model versions simultaneously, routing traffic between models for A/B testing, and maintaining model registries that track lineage and performance.
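Traffic routing for an A/B test is often done by hashing a stable key, such as a user id, so that the same caller always reaches the same model version. The sketch below shows one way this could look. The function name, the version labels, and the bucket count are assumptions for the example.

```python
import hashlib

BUCKETS = 10_000  # hypothetical resolution: shares down to 0.01%


def choose_model_version(request_key, candidate_share, control="v1", candidate="v2"):
    """Deterministically assign a request key to the control or candidate model."""
    if not 0.0 <= candidate_share <= 1.0:
        raise ValueError("candidate_share must be between 0 and 1")
    digest = hashlib.sha256(request_key.encode("utf-8")).digest()
    # Map the first 8 bytes of the hash onto one of BUCKETS buckets.
    bucket = int.from_bytes(digest[:8], "big") % BUCKETS
    return candidate if bucket < candidate_share * BUCKETS else control
```

Because assignment depends only on the key and the share, raising `candidate_share` moves additional keys to the candidate without reshuffling the keys that were already assigned to it.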

The Cost Reality Check

Pilots are relatively inexpensive to run. Production systems have ongoing operational costs that many organisations underestimate.

Compute costs scale with usage. Infrastructure requirements grow significantly when moving from prototype to production scale, particularly for models requiring GPU acceleration or high-throughput processing.

Operational overhead includes monitoring tools, infrastructure management, security compliance, and engineering time for maintenance and updates. These costs compound over time and often exceed initial estimates.

From Pilot to Production: A Structured Approach

Phase 1: Production Readiness Assessment

Before scaling any AI pilot, conduct a thorough assessment of production requirements. This includes technical architecture review, data pipeline design, and operational requirements planning.

Identify the gaps between pilot and production environments. Map out the additional infrastructure, processes, and team capabilities needed for success.

Phase 2: Foundation Building

Build the infrastructure foundations before deploying the AI model. This includes data pipelines, monitoring systems, and deployment automation.

This phase typically takes longer than expected but is essential for sustainable production deployment. Rushing this step leads to technical debt that becomes expensive to resolve later.

Phase 3: Production Deployment

Deploy the AI model using a phased approach. Start with limited traffic, monitor performance closely, and gradually increase load as confidence builds.
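The phased approach can be expressed as a small decision rule: advance to the next traffic step while the new model's error rate stays close to the baseline, and drop to zero if it regresses. The ramp percentages, the `max_regression` margin, and the function name below are hypothetical values chosen for illustration.

```python
RAMP_STEPS = [1, 5, 25, 50, 100]  # hypothetical traffic percentages


def next_traffic_share(current_share, error_rate, baseline_error_rate, max_regression=0.01):
    """Return the traffic percentage for the next stage of a phased rollout.

    Rolls back to 0 if the new model's error rate exceeds the baseline by
    more than max_regression; otherwise advances one step up the ramp.
    """
    if error_rate > baseline_error_rate + max_regression:
        return 0
    for step in RAMP_STEPS:
        if step > current_share:
            return step
    return 100  # already at full traffic
```

A production rollout controller would usually also require a minimum observation period and sample size at each step before advancing.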

Implement proper monitoring and alerting from day one. Production issues are easier to resolve when you can detect them quickly and understand their root causes.

Phase 4: Continuous Improvement

Production AI is not a one-off deployment event; it is an ongoing process. Models need regular evaluation, data pipelines require maintenance, and infrastructure must evolve with business requirements.

Establish regular review cycles for model performance, data quality, and system reliability. Plan for model retraining, feature updates, and infrastructure scaling.

The Australian Context

Australian organisations face specific challenges when scaling AI to production. Data sovereignty requirements may limit cloud deployment options. Privacy legislation affects how customer data can be processed and stored.

A shortage of AI engineering skills means many organisations lack the internal capability to bridge the pilot-to-production gap. This is where partnering with experienced AI engineering teams becomes valuable.

Working with External Partners

Many organisations find that scaling AI pilots requires capabilities they do not have in-house. This includes MLOps expertise, data engineering skills, and production AI architecture knowledge.

The right partner helps bridge the gap between data science pilots and production systems. They bring experience from similar deployments and can accelerate time to production while building internal capability.

Look for partners who focus on production deployment, not just pilot development. The skills required are different, and experience with enterprise AI deployment matters significantly.

Getting Your AI Pilot to Production

Scaling AI from pilot to production requires systematic planning, proper infrastructure, and realistic expectations about complexity and cost.

The organisations that succeed treat production deployment as a distinct project, one that requires different skills, tools, and processes from pilot development. They invest in proper foundations and plan for ongoing operational requirements.

Most importantly, they recognise that production AI is an engineering discipline that requires collaboration between data scientists and engineering teams.

If you have a successful AI pilot and need help scaling to production, we specialise in bridging this gap. Our AI product strategy and engineering teams work with mid-market organisations to turn promising pilots into production systems that deliver business value.

Ready to discuss your production AI requirements? Get in touch to start the conversation.

Chris Kerr

Founder of Horizon Labs. Twenty years building production software for Australian mid-market businesses, the last seven focused on putting AI into systems that operate at 3am without anyone watching. Writes about strategy, fractional CTO work, and the operational discipline that separates AI demos from AI products.