AI Governance for Australian Businesses: What You Need to Know in 2026

AI governance is shifting from optional to essential for Australian mid-market companies. With evolving regulations and growing compliance pressures, establishing proper frameworks for safe, ethical AI operations has become a business imperative.

AI governance is the framework of policies, processes, and controls that ensure artificial intelligence systems operate safely, ethically, and in compliance with applicable laws. As AI adoption accelerates across Australian mid-market companies, establishing proper governance has shifted from optional to essential.

The regulatory landscape is evolving rapidly. While Australia doesn't yet have comprehensive AI-specific legislation like the EU's AI Act, 2026 brings new compliance pressures from multiple directions: updated privacy laws, industry-specific regulations, and growing expectations from customers, investors, and partners.

Australia's Evolving AI Regulatory Landscape

Australia's approach to AI regulation is taking shape through sector-specific requirements rather than a single comprehensive law. The Australian Government's AI Ethics Framework, while voluntary, provides the foundation most organisations are building upon.

Key regulatory developments affecting Australian businesses include updates to the Privacy Act 1988, including automated decision-making provisions centred on transparency and explanation for affected individuals. The Australian Competition and Consumer Commission (ACCC) is also scrutinising AI systems for potential consumer harm and competitive impacts.

Industry-specific regulators are developing AI-related guidance. Financial institutions are expected to manage AI risks within existing operational risk frameworks. Healthcare organisations using AI for clinical purposes must consider therapeutic goods regulations and medical device guidelines.

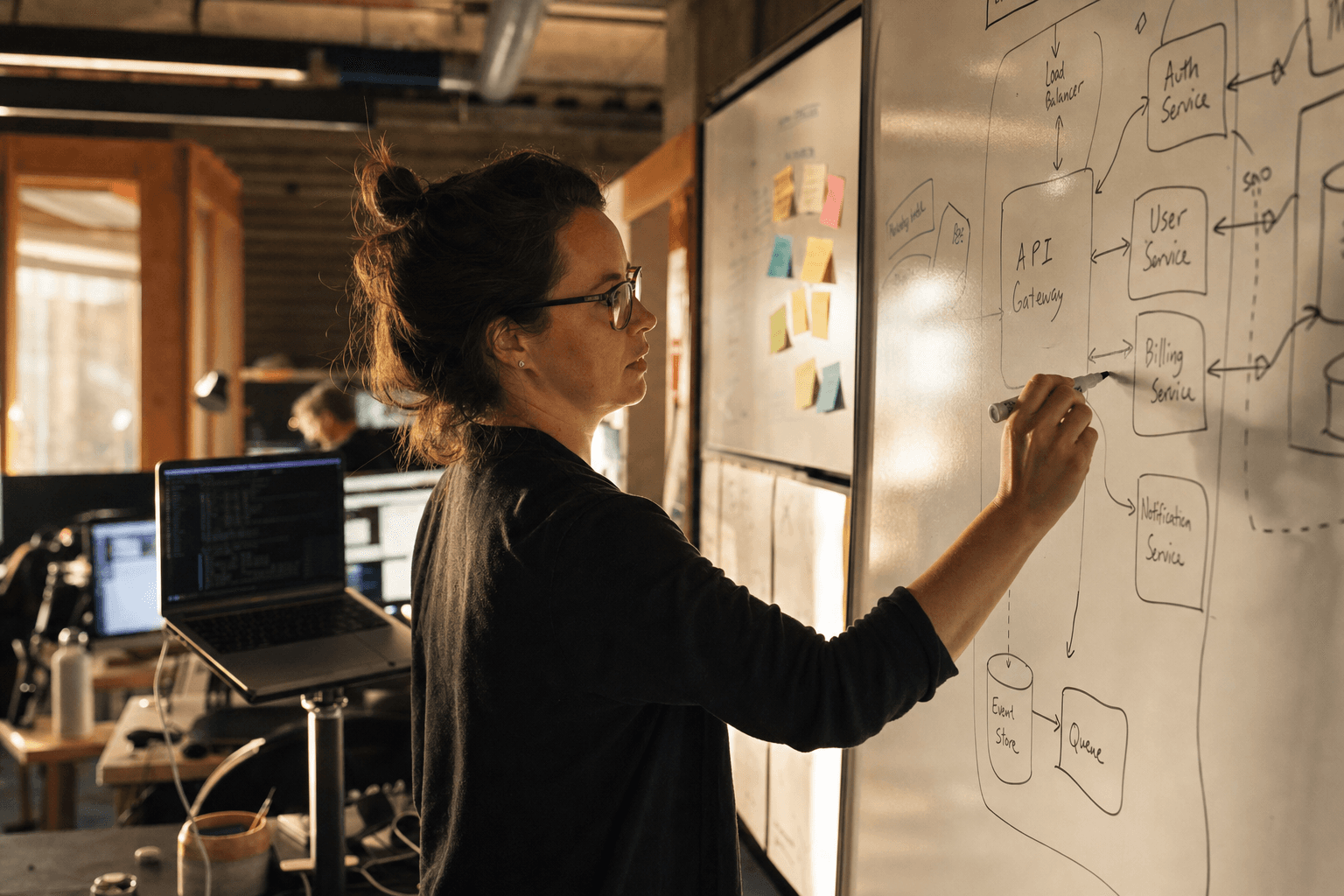

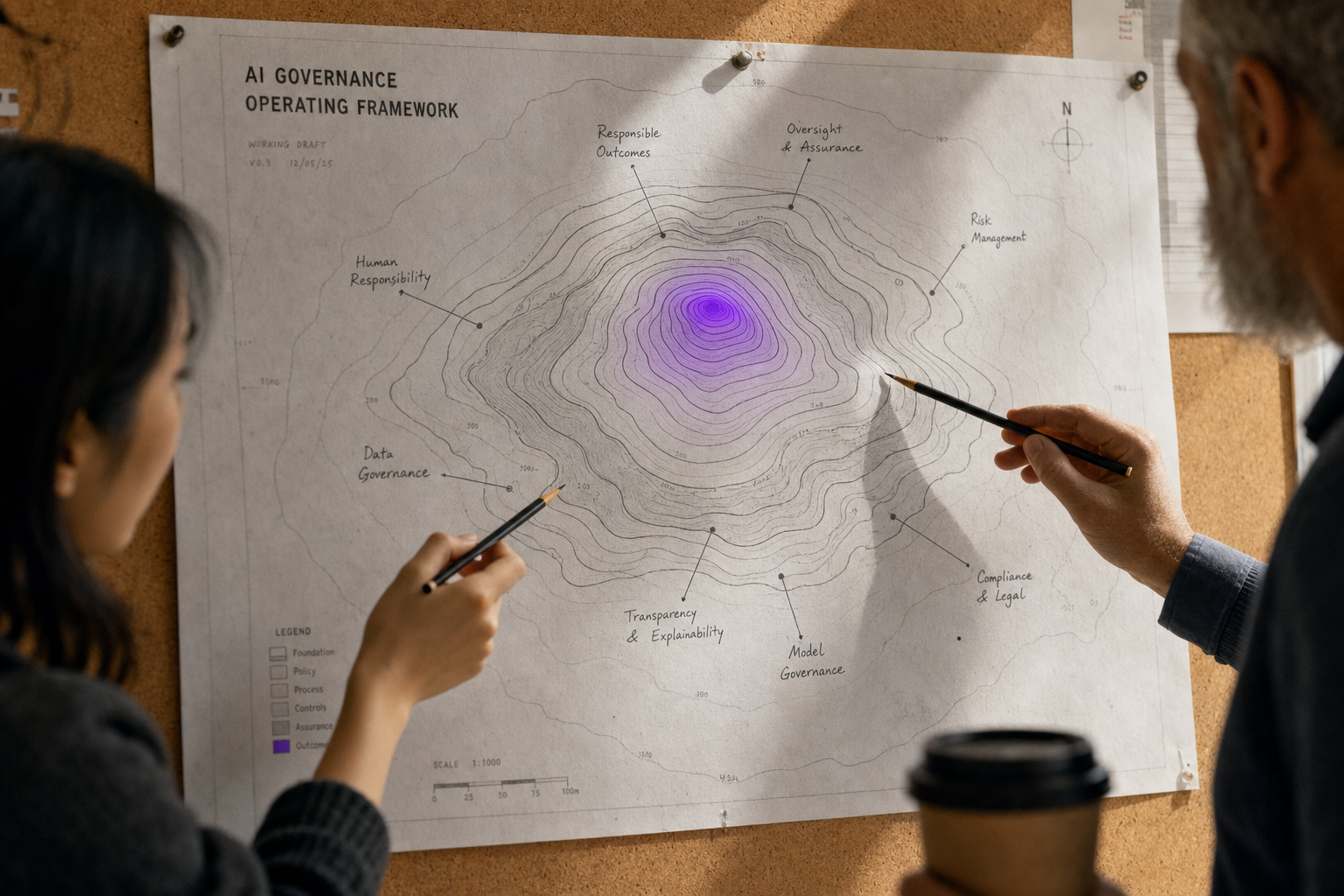

Essential Components of Responsible AI Frameworks

A responsible AI framework addresses eight core principles, matching those in Australia's AI Ethics Framework: human, societal and environmental wellbeing; human-centred values; fairness; privacy protection and security; reliability and safety; transparency and explainability; contestability; and accountability.

Risk Assessment and Classification forms the foundation. Not all AI systems carry equal risk. A chatbot answering customer FAQs has different implications from a system making credit decisions. Classification helps prioritise governance efforts where they matter most.

Organisations typically categorise AI systems into risk tiers based on their potential impact on individuals and business operations. High-risk systems generally include those making consequential decisions about people, while low-risk systems might handle routine data processing tasks.
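
As a rough illustration of tiering, the sketch below assigns a risk tier from a few intake questions. The field names, the three tiers, and the rules themselves are assumptions made for this example, not a regulatory standard; each organisation would set its own criteria.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass(frozen=True)
class AISystemProfile:
    """Hypothetical intake answers for one AI system."""
    name: str
    makes_decisions_about_people: bool  # e.g. credit, hiring, eligibility
    uses_personal_information: bool
    human_reviews_every_output: bool


def classify(profile: AISystemProfile) -> RiskTier:
    """Assign a tier using illustrative rules (assumed, adjust to your risk appetite)."""
    # Consequential decisions about people with no human review: highest tier.
    if profile.makes_decisions_about_people and not profile.human_reviews_every_output:
        return RiskTier.HIGH
    # Reviewed decisions, or any use of personal information: middle tier.
    if profile.makes_decisions_about_people or profile.uses_personal_information:
        return RiskTier.MEDIUM
    return RiskTier.LOW


faq_bot = AISystemProfile("customer-faq-chatbot", False, False, False)
credit_model = AISystemProfile("credit-decisioning", True, True, False)

print(classify(faq_bot).value)       # low
print(classify(credit_model).value)  # high
```

Keeping the rules in one small function makes them easy to review and change as your risk appetite or the regulatory picture shifts.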

Data Governance Integration ensures AI systems inherit strong data practices. This includes data quality controls, lineage tracking, and ensuring training data doesn't introduce harmful biases. Your data infrastructure becomes the foundation for trustworthy AI.

Model Documentation and Versioning creates audit trails. Document training data sources, model architecture decisions, performance metrics, and known limitations. Version control for models mirrors software development best practices.
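
One lightweight way to capture this is a structured record stored alongside each model version. The sketch below is a minimal example; the `ModelRecord` fields and the sample values are hypothetical and simply mirror the items listed above.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ModelRecord:
    """Illustrative documentation record for one model version."""
    model_name: str
    version: str
    training_data_sources: list[str]
    architecture_notes: str
    performance_metrics: dict[str, float]
    known_limitations: list[str] = field(default_factory=list)

    def to_json(self) -> str:
        # Sorted keys keep diffs between versions easy to read in version control.
        return json.dumps(asdict(self), indent=2, sort_keys=True)


# Sample values are made up for the example.
record = ModelRecord(
    model_name="churn-predictor",
    version="1.2.0",
    training_data_sources=["crm_export_2025q4"],
    architecture_notes="Gradient-boosted trees, chosen for explainability",
    performance_metrics={"auc": 0.87},
    known_limitations=["Not validated for customers with under 3 months of history"],
)

print(record.to_json())
```

Committing the JSON output next to the model artefact gives auditors a dated, versioned trail without any extra tooling.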

Audit Requirements and Compliance Obligations

Audit requirements vary by industry and AI system risk level, but common elements are emerging across sectors. Financial services organisations must demonstrate AI systems comply with existing credit and insurance regulations, including anti-discrimination requirements.

Healthtech companies using AI for diagnostic or treatment recommendations face software as a medical device (SaMD) requirements, including clinical evidence and post-market surveillance considerations.

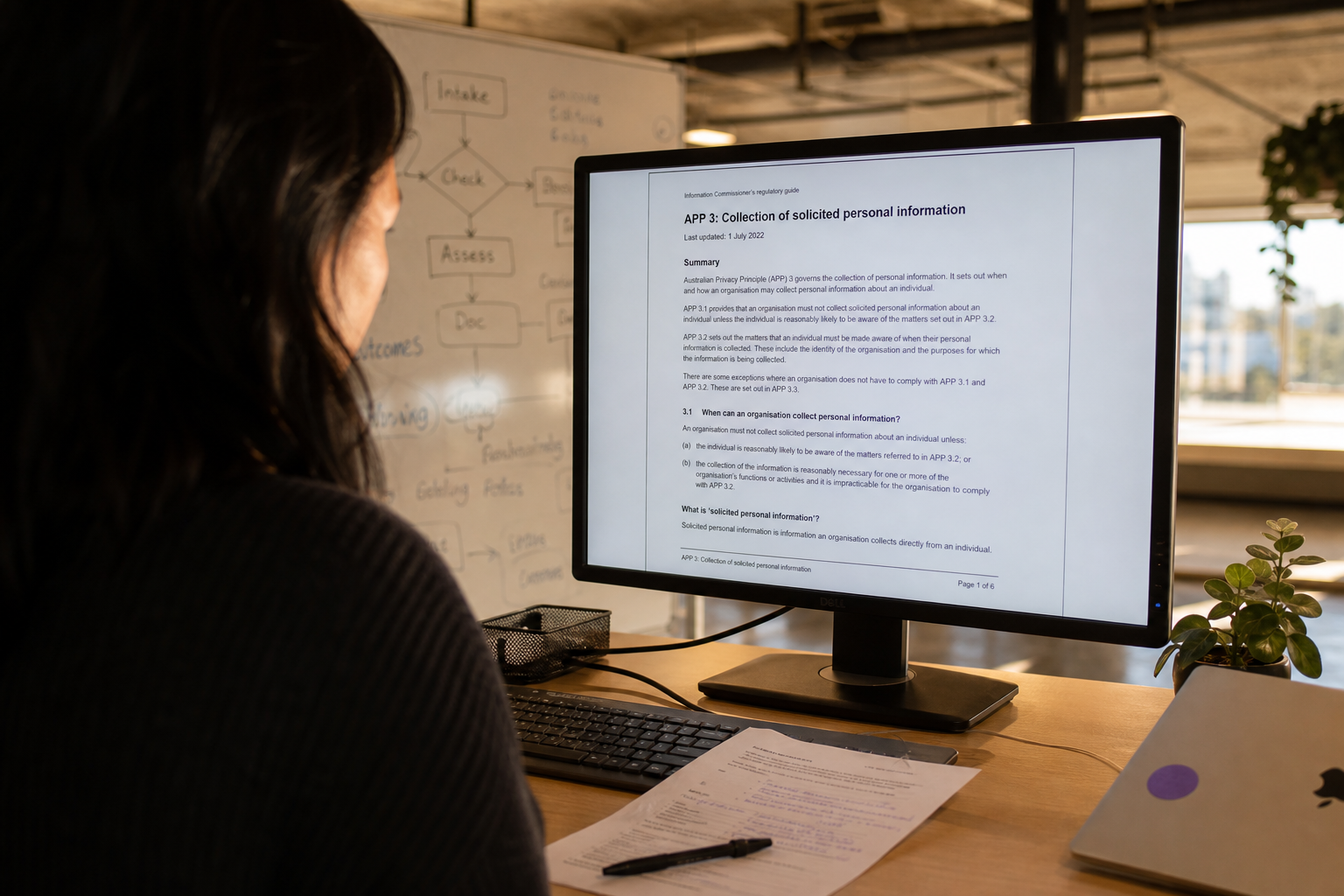

All organisations using AI for automated decision-making should prepare for Privacy Act obligations around algorithmic transparency and individual rights to explanation.

Documentation Standards should include model development records, training data provenance, validation testing results, and ongoing performance monitoring. Keep detailed logs of who approved which decisions, and when.

Regular Review Cycles ensure governance keeps pace with system changes. Industry best practices suggest more frequent reviews for higher-risk systems, with annual reviews typically sufficient for lower-risk applications. Include both technical performance and ethical impact assessments.
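
A review cadence like this can be tracked with very little code. In the sketch below, only the annual interval for low-risk systems comes from the guidance above; the 90-day and 180-day intervals for higher tiers are assumptions chosen for illustration.

```python
from datetime import date, timedelta

# Assumed intervals: only the annual low-risk cadence reflects the text above.
REVIEW_INTERVAL_DAYS = {"high": 90, "medium": 180, "low": 365}


def next_review(last_review: date, risk_tier: str) -> date:
    """Return the date the next governance review falls due."""
    return last_review + timedelta(days=REVIEW_INTERVAL_DAYS[risk_tier])


def is_overdue(last_review: date, risk_tier: str, today: date) -> bool:
    """True when the review due date has already passed."""
    return today > next_review(last_review, risk_tier)


print(next_review(date(2026, 1, 1), "high"))  # 2026-04-01
print(next_review(date(2026, 1, 1), "low"))   # 2027-01-01
print(is_overdue(date(2026, 1, 1), "high", today=date(2026, 5, 1)))  # True
```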

Practical Governance Steps for Mid-Market Companies

Start with an AI inventory. Many companies don't fully know where AI appears in their systems. This includes obvious applications like recommendation engines, but also embedded AI in SaaS tools, marketing platforms, and development tools.
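
An inventory does not need special tooling to begin with. The sketch below shows one possible shape for an entry, plus two simple checks; the field names, source categories, and sample systems are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class InventoryEntry:
    """Illustrative record for one place AI appears in the business."""
    system: str
    source: str                    # e.g. "in-house", "embedded in SaaS"
    purpose: str
    business_owner: Optional[str]  # None means nobody is accountable yet


def unowned(entries: list[InventoryEntry]) -> list[str]:
    """List systems that still need an accountable owner."""
    return [e.system for e in entries if e.business_owner is None]


def count_by_source(entries: list[InventoryEntry]) -> Counter:
    """Show how much AI is built in-house versus arriving inside vendor tools."""
    return Counter(e.source for e in entries)


# Sample entries are made up for the example.
inventory = [
    InventoryEntry("recommendation-engine", "in-house", "product suggestions", "Head of Product"),
    InventoryEntry("crm-lead-scoring", "embedded in SaaS", "sales prioritisation", None),
    InventoryEntry("code-assistant", "embedded in SaaS", "developer productivity", "CTO"),
]

print(unowned(inventory))          # ['crm-lead-scoring']
print(count_by_source(inventory))
```

The unowned list is often the most useful first output, because embedded AI in vendor tools tends to arrive without anyone being assigned to it.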

Establish Clear Roles and Responsibilities without creating bureaucracy. Designate an AI governance lead, but avoid creating a new department unless AI is core to your business. Often this role fits naturally with existing risk, compliance, or technology leadership.

Create Decision Gates in your development process. Before deploying any AI system, require sign-offs addressing data quality, bias testing, performance validation, and legal compliance. Make this part of your existing change management process.
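
A decision gate can be as simple as a check that runs before release. The sketch below assumes sign-offs are recorded as a mapping from gate name to approver; the gate names follow the four areas above, while the function names and data shape are illustrative only.

```python
# The four gates named above; the identifiers themselves are assumptions.
REQUIRED_SIGN_OFFS = (
    "data_quality",
    "bias_testing",
    "performance_validation",
    "legal_compliance",
)


def missing_sign_offs(sign_offs: dict[str, str]) -> list[str]:
    """Return required gates that have no named approver."""
    return [gate for gate in REQUIRED_SIGN_OFFS if not sign_offs.get(gate, "").strip()]


def can_deploy(sign_offs: dict[str, str]) -> bool:
    """Deployment is allowed only when every gate has an approver."""
    return not missing_sign_offs(sign_offs)


# Sample approvers are made up for the example.
release = {
    "data_quality": "j.nguyen",
    "bias_testing": "",  # recorded but not yet approved
    "performance_validation": "s.patel",
}

print(missing_sign_offs(release))  # ['bias_testing', 'legal_compliance']
print(can_deploy(release))         # False
```

A check like this slots into an existing change management or release pipeline, so it adds a step rather than a separate process.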

Implement Continuous Monitoring for production AI systems. Models drift over time as real-world data changes. Set up alerts for performance degradation, unusual outputs, or bias indicators. This monitoring should integrate with existing operational dashboards.
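
One common drift measure is the Population Stability Index (PSI), which compares the distribution of a model input or score in production against a baseline. The sketch below is a minimal pure-Python version; the equal-width bins, the small floor for empty bins, and the 0.25 alert threshold (a widely used rule of thumb) are assumptions to tune for your own systems.

```python
import math


def population_stability_index(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """Compare two samples using equal-width bins derived from the baseline."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width when the baseline is constant

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Values outside the baseline range fall into the first or last bin.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_alert(psi: float, threshold: float = 0.25) -> bool:
    """Flag drift when PSI exceeds the (assumed) threshold."""
    return psi > threshold


baseline = [i / 100 for i in range(100)]          # scores seen at validation time
production = [0.5 + i / 200 for i in range(100)]  # scores shifted upwards

print(drift_alert(population_stability_index(baseline, baseline)))    # False
print(drift_alert(population_stability_index(baseline, production)))  # True
```

The boolean result is the piece to feed into an existing alerting or dashboard tool.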

Build Incident Response Procedures specific to AI failures. When an AI system behaves unexpectedly, you need clear procedures for assessment, containment, communication, and remediation. This differs from traditional system outages because AI failures can have discriminatory or reputational impacts.

Building Internal Capabilities vs External Support

Most mid-market companies lack deep AI governance expertise internally. Building this capability requires understanding both technical AI systems and regulatory requirements across multiple domains.

Internal capabilities to develop include basic AI literacy for leadership teams, risk assessment skills, and integration with existing governance processes. Your legal and compliance teams need to understand AI systems well enough to assess their implications.

External support becomes valuable for specialised areas like bias testing, model validation, and regulatory compliance assessment. Many organisations find success combining internal governance leadership with external technical expertise for specific projects.

Consider starting with an AI readiness assessment to understand your current state and identify gaps. This helps prioritise where to build internal capability versus where to seek external support.

Implementation Roadmap

Phase 1: Foundation (Months 1-3)

- Complete AI system inventory
- Establish governance team and clear responsibilities
- Develop initial risk classification framework
- Create basic documentation templates

Phase 2: Framework Development (Months 4-6)

- Build detailed governance policies and procedures
- Implement decision gates in development processes
- Establish monitoring and review cycles
- Train teams on governance requirements

Phase 3: Continuous Improvement (Months 7-12)

- Refine processes based on operational experience
- Expand monitoring capabilities
- Build deeper internal expertise
- Prepare for evolving regulatory requirements

The Business Case for AI Governance

Proper AI governance isn't just about compliance; it's about sustainable competitive advantage. Companies with strong governance frameworks can deploy AI systems faster and with more confidence, and they avoid the costly mistakes that come from inadequate testing or oversight.

Governance also becomes a differentiator when competing for customers, particularly in regulated industries where trust is paramount. Demonstrating responsible AI practices can accelerate sales cycles and reduce procurement friction.

Getting Started

AI governance might seem daunting, but it doesn't require perfect solutions from day one. Start with your highest-risk AI systems and build governance capabilities iteratively.

Focus on integrating AI governance with existing processes rather than creating parallel bureaucracy. Most mid-market companies already have risk management, change control, and compliance processes that can be extended to cover AI systems.

The key is starting now. Regulatory requirements will only increase, and building governance capabilities takes time. Companies that start early will be better positioned as requirements evolve.

Our AI product strategy and AI engineering teams help Australian companies build responsible AI systems with proper governance from the start. We can also support existing AI implementations through governance assessments and remediation.

Ready to build a robust AI governance framework for your organisation? Get in touch to discuss your specific requirements and develop a practical implementation plan.

Aisha Reddy

Head of AI Strategy at Horizon Labs. Runs the AI Readiness Assessments and advises mid-market boards on where AI investment actually pays back. Former management consultant who got tired of slide decks; now ships AI that delivers measurable outcomes. Writes about strategy, governance, and how to evaluate AI consultancies without being sold to.