AI in Australian Healthcare: Clinical Support and Administration

AI in Australian healthcare augments clinical expertise and streamlines administration while maintaining regulatory compliance. Success requires structured implementation addressing technical, clinical, and TGA regulatory requirements.

AI in Australian healthcare is creating measurable value by augmenting clinical expertise and streamlining administrative workflows — not replacing the human judgment that defines quality healthcare. Modern healthcare AI systems support diagnostic decisions, predict patient deterioration, and automate routine administrative tasks while maintaining clinical oversight and regulatory compliance.

The opportunity is significant for Australian healthcare organisations facing increasing patient loads, administrative burden, and pressure to improve outcomes while managing costs. AI technologies like natural language processing, predictive analytics, and computer vision can address these challenges when implemented with proper regulatory compliance and clinical integration.

Clinical Decision Support: Augmenting Medical Expertise

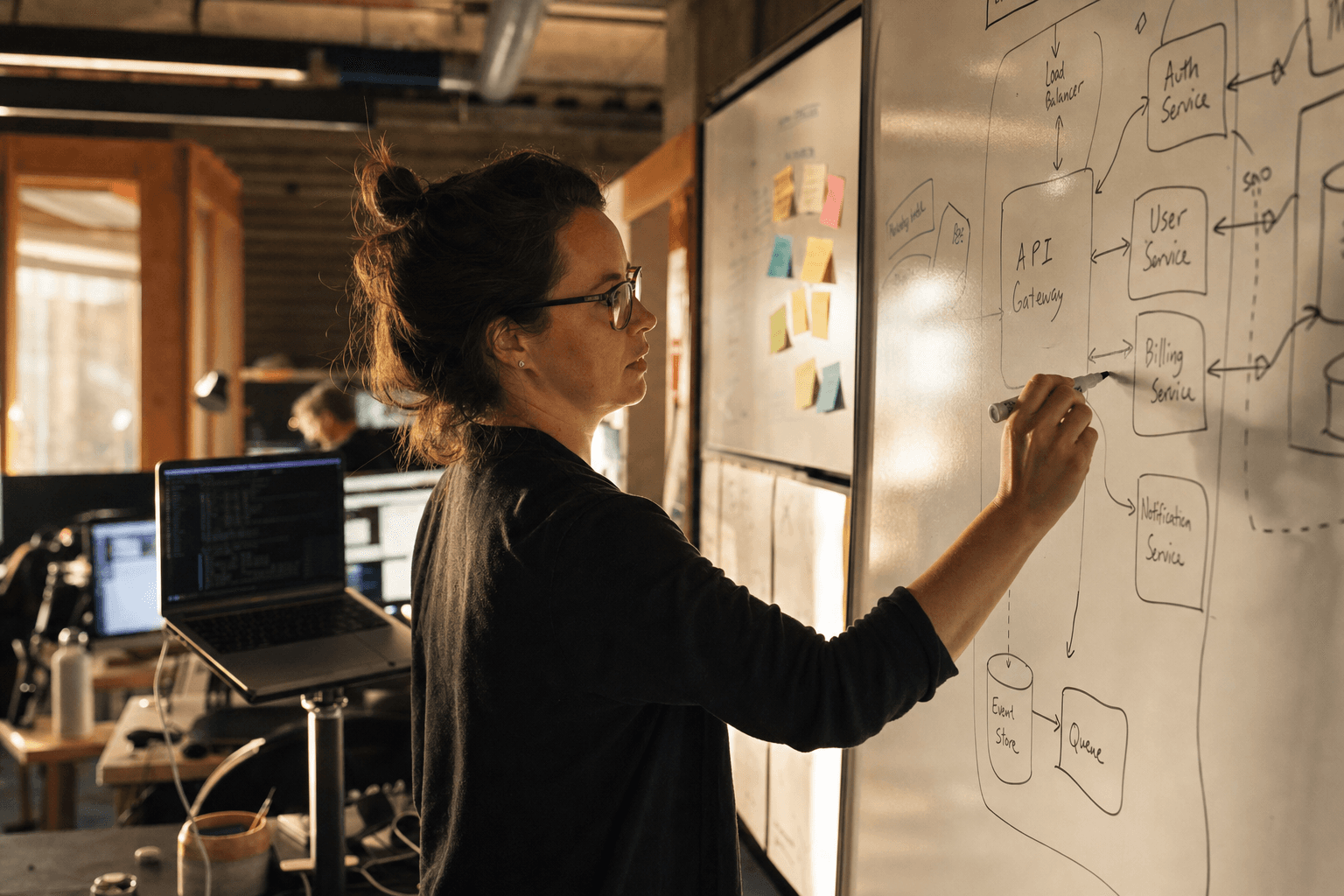

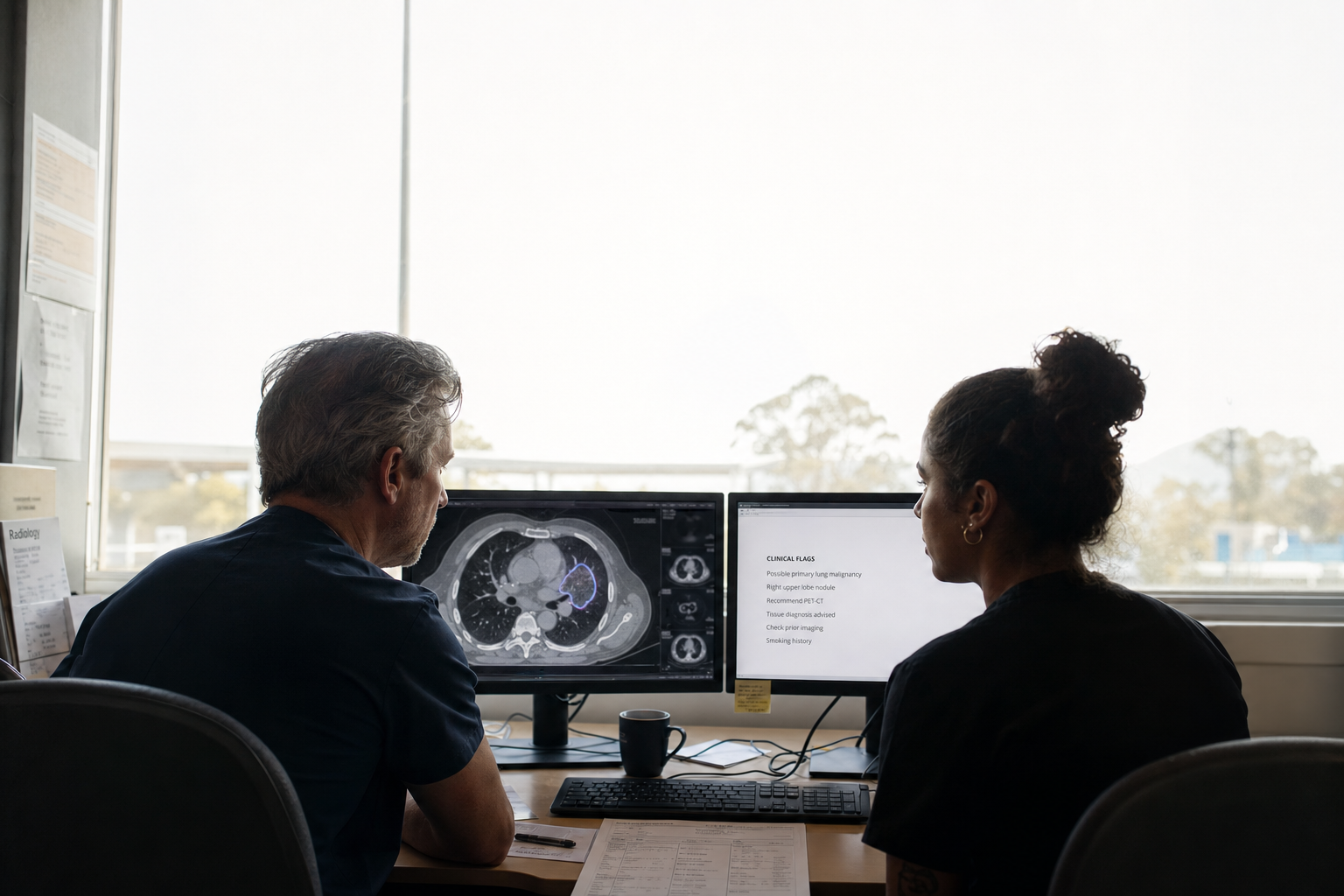

Clinical decision support systems use AI to analyse patient data and provide evidence-based recommendations to healthcare professionals. These systems process clinical data — lab results, imaging studies, patient history, and real-time monitoring data — to identify patterns that support clinical decision-making.

AI-powered diagnostic imaging is seeing adoption in Australian hospitals. Computer vision algorithms can detect diabetic retinopathy in eye scans, identify suspicious lesions in radiology images, and flag urgent cases for immediate review. These systems don't replace radiologists but help them prioritise cases and identify subtle abnormalities that warrant closer examination.

Predictive analytics models analyse patient vital signs, lab values, and clinical notes to identify patients at risk of sepsis, cardiac events, or clinical deterioration. Early warning systems alert nursing staff and physicians when intervention might prevent adverse outcomes. The Royal Melbourne Hospital, for example, has implemented early warning systems that analyse patient data streams to predict deterioration risk.
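As a rough illustration of how an early warning system turns vital signs into an alert, the sketch below adds up per-vital points and flags a patient once the total crosses a threshold. The scoring bands, the `Vitals` fields, and `ALERT_THRESHOLD` are placeholder values invented for this example. They are not a validated clinical score and not the method used at any particular hospital; production systems typically rely on validated scoring tools or trained models.

```python
from dataclasses import dataclass

# Placeholder scoring bands: (lower bound inclusive, upper bound exclusive, points).
# These numbers are illustrative assumptions only, NOT clinically validated.
BANDS = {
    "heart_rate": [(0, 40, 3), (40, 50, 1), (50, 100, 0), (100, 120, 1), (120, 999, 3)],
    "resp_rate": [(0, 8, 3), (8, 12, 1), (12, 21, 0), (21, 25, 2), (25, 999, 3)],
    "spo2": [(0, 90, 3), (90, 94, 2), (94, 96, 1), (96, 101, 0)],
}
ALERT_THRESHOLD = 5  # hypothetical escalation threshold


@dataclass
class Vitals:
    heart_rate: float  # beats per minute
    resp_rate: float   # breaths per minute
    spo2: float        # oxygen saturation, percent


def band_points(name: str, value: float) -> int:
    """Return the points for one vital sign; values outside every band score the maximum."""
    for low, high, points in BANDS[name]:
        if low <= value < high:
            return points
    return 3


def warning_score(v: Vitals) -> int:
    """Sum the points across all monitored vital signs."""
    return sum(band_points(name, getattr(v, name)) for name in BANDS)


def needs_review(v: Vitals) -> bool:
    """Flag the patient for clinician review; the decision itself stays with staff."""
    return warning_score(v) >= ALERT_THRESHOLD
```

The function only raises a flag. What happens next remains a clinical decision, which matches the oversight model described above.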

Drug interaction checkers and dosage optimisation tools cross-reference patient medications, allergies, and conditions to flag potential adverse reactions. These systems are particularly valuable in complex cases involving multiple medications or patients with multiple comorbidities — situations where manual cross-checking becomes increasingly difficult.
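The cross-referencing step can be sketched as a lookup over medication pairs and recorded allergies. The tables below are tiny hard-coded stand-ins chosen for illustration; a real checker would query a maintained clinical knowledge base, and the function name `check_medications` is hypothetical.

```python
from itertools import combinations

# Illustrative lookup tables only; a real system would query a maintained
# clinical knowledge base, not a hard-coded dictionary.
INTERACTIONS = {
    frozenset({"warfarin", "aspirin"}): "increased bleeding risk",
}
ALLERGY_CLASSES = {
    "penicillin": {"amoxicillin"},
}


def check_medications(medications, allergies):
    """Return a list of human-readable flags for a clinician to review."""
    meds = sorted({m.lower() for m in medications})
    flags = []
    # Check every unordered pair of medications against the interaction table.
    for a, b in combinations(meds, 2):
        reason = INTERACTIONS.get(frozenset({a, b}))
        if reason:
            flags.append(f"{a} + {b}: {reason}")
    # Check each medication against the patient's recorded allergies.
    for allergy in allergies:
        for med in meds:
            if med in ALLERGY_CLASSES.get(allergy.lower(), set()):
                flags.append(f"{med}: patient has recorded {allergy.lower()} allergy")
    return flags
```

The number of pairs grows quadratically with the number of medications, which is one reason manual cross-checking becomes harder in polypharmacy cases.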

From our experience implementing healthcare AI systems, the most successful deployments focus on specific clinical workflows rather than attempting broad automation. Systems that integrate seamlessly with existing clinical information systems and provide recommendations at the point of care see higher adoption rates among clinicians.

Administrative Automation: Reducing Clinical Burden

Administrative tasks consume significant clinical time that could be spent on patient care. AI automation can handle routine administrative workflows while maintaining accuracy and compliance with Australian healthcare regulations.

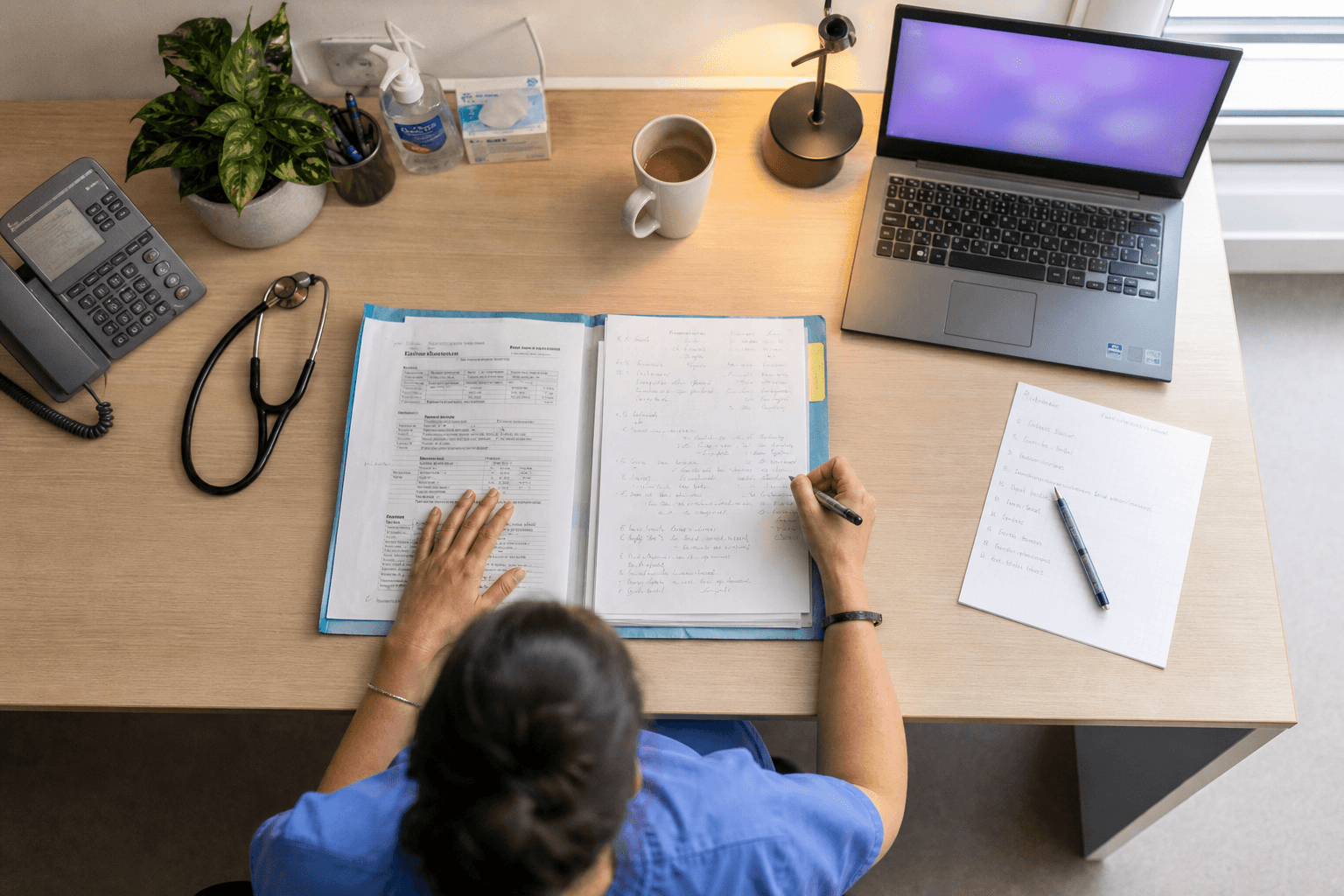

Natural language processing systems can automatically extract key information from clinical notes, populate discharge summaries, and generate referral letters. Voice recognition technology allows clinicians to dictate notes that are automatically transcribed and formatted according to clinical standards. This technology has matured significantly, with accuracy rates approaching human-level transcription in controlled clinical environments.

Appointment scheduling systems use predictive models to optimise clinic schedules, reduce no-shows, and match patient needs with appropriate appointment types and durations. These systems can automatically reschedule appointments based on patient preferences and clinical priorities, reducing the administrative load on reception staff.

Billing and coding automation reviews clinical documentation to suggest appropriate diagnostic codes and flag potential billing errors. This reduces administrative workload while ensuring compliance with Medicare and private insurance requirements. However, human oversight remains essential for complex cases and final code assignment.

Patient communication platforms can handle routine inquiries, medication reminders, and appointment confirmations through chatbots and automated messaging systems. These tools free up staff time while maintaining patient engagement, though they must be designed to escalate complex queries to human staff appropriately.
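One way to picture the escalation requirement is a router that handles only the intents it recognises and hands everything else to staff. The keyword lists and intent names below are hypothetical and deliberately simplistic; real deployments need clinically reviewed escalation criteria and usually a more capable language model than keyword matching.

```python
import re

# Hypothetical routing rules for illustration only.
ESCALATION_TERMS = {"chest pain", "bleeding", "overdose", "suicidal", "can't breathe"}
ROUTINE_INTENTS = {
    "confirm": "appointment_confirmation",
    "reschedule": "appointment_reschedule",
    "reminder": "medication_reminder",
}


def route_message(text: str) -> str:
    """Return a routine intent, or 'escalate_to_staff' when in any doubt."""
    # Lowercase and collapse whitespace so multi-word terms match reliably.
    normalised = re.sub(r"\s+", " ", text.lower()).strip()
    # Safety-related terms always win over routine intents.
    if any(term in normalised for term in ESCALATION_TERMS):
        return "escalate_to_staff"
    for keyword, intent in ROUTINE_INTENTS.items():
        if keyword in normalised:
            return intent
    # Anything unrecognised goes to a human rather than being guessed at.
    return "escalate_to_staff"
```

Defaulting to escalation means an unrecognised query costs some staff time instead of producing a wrong automated answer.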

The key insight from our healthcare AI implementations is that administrative automation succeeds when it eliminates repetitive tasks rather than replacing human judgment. The most effective systems work behind the scenes to prepare information and flag issues, leaving final decisions to healthcare professionals.

TGA Regulatory Framework for Healthcare AI

The Therapeutic Goods Administration (TGA) regulates medical devices in Australia, including AI-powered software that makes clinical recommendations or influences patient care decisions. Understanding TGA requirements is essential for healthcare AI implementation, and those requirements vary significantly with the intended use and risk profile of the system.

According to TGA guidance documents, AI software that provides diagnostic information or treatment recommendations typically qualifies as a medical device under the Therapeutic Goods Act 1989. This includes clinical decision support systems that analyse patient data and provide specific recommendations to clinicians, but excludes systems that merely organise or display data without analysis.

The TGA classifies medical devices by risk, from Class I (lowest risk) through Class IIa and IIb to Class III (highest risk). Low-risk AI tools for purely administrative tasks, such as appointment scheduling or basic data entry, generally fall outside the medical device definition and may not require inclusion in the Australian Register of Therapeutic Goods (ARTG). However, high-risk systems that influence critical clinical decisions require extensive validation and ongoing monitoring under the TGA's regulatory framework.

For Class IIa, IIb, and III medical device software, the TGA requires clinical evidence demonstrating safety and effectiveness. This typically includes validation studies showing the AI system performs as intended in Australian healthcare settings, with particular attention to local population characteristics and clinical practices that may differ from international validation studies.

Post-market surveillance is mandatory for devices included in the Australian Register of Therapeutic Goods. This includes ongoing monitoring of AI system performance, adverse event reporting, and regular safety updates. For AI systems, this surveillance must account for potential algorithm drift, where the data seen in production gradually diverges from the data the model was validated on, and for changing performance characteristics over time.
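Drift monitoring can take many forms. A minimal sketch, assuming the team logs model input values or output scores, is to compare a recent sample against the validation-time baseline using the population stability index (PSI). PSI is a general-purpose statistic, not something TGA guidance prescribes, and the bin edges and `DRIFT_THRESHOLD` here are assumptions to tune per system.

```python
import math

DRIFT_THRESHOLD = 0.25  # commonly quoted rule of thumb; treat as an assumption to tune


def population_stability_index(expected, actual, edges):
    """PSI between two samples using shared bin edges (len(edges) - 1 bins).

    Values outside the edges are ignored when counting.
    """

    def proportions(values):
        counts = [0] * (len(edges) - 1)
        for v in values:
            for i in range(len(counts)):
                last = i == len(counts) - 1
                # Bins are half-open, except the last bin also includes its upper edge.
                if edges[i] <= v < edges[i + 1] or (last and v == edges[-1]):
                    counts[i] += 1
                    break
        # Floor at a small value so empty bins do not cause log(0) or division by zero.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def has_drifted(expected, actual, edges) -> bool:
    """Flag the model for review when the score distribution has shifted."""
    return population_stability_index(expected, actual, edges) > DRIFT_THRESHOLD
```

A distribution check like this detects input or score shift only. It complements, and does not replace, tracking accuracy against confirmed clinical outcomes.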

Quality management systems must meet ISO 13485 standards, with additional requirements for AI systems including algorithm validation documentation, bias testing protocols, and performance monitoring capabilities. The TGA has published specific guidance on software as medical devices that healthcare organisations should review before implementing AI systems.

Data Privacy and Security in Healthcare AI

Healthcare AI systems process sensitive patient information, making data privacy and security paramount under Australian privacy legislation. Healthcare organisations must comply with privacy requirements while enabling AI innovation — a balance that requires careful technical and legal consideration.

The Privacy Act 1988, including the Australian Privacy Principles and the Notifiable Data Breaches scheme introduced by the Privacy Amendment (Notifiable Data Breaches) Act 2017, governs how healthcare organisations collect, use, and disclose personal health information. AI systems must implement privacy-by-design principles, ensuring patient data is protected throughout the AI lifecycle from initial collection through model training, deployment, and ongoing operation.

Data de-identification is crucial for AI training while protecting patient privacy. Advanced techniques like differential privacy can enable AI model development while providing mathematical guarantees about individual privacy protection. However, healthcare organisations must carefully evaluate whether de-identification techniques provide sufficient protection given the sensitive nature of health data and the potential for re-identification through data linking.
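To make the "mathematical guarantee" concrete, the sketch below applies the Laplace mechanism, one of the basic building blocks of differential privacy, to a simple count query such as "how many patients in this cohort had a given diagnosis". It covers aggregate statistics only; differentially private model training uses related but more involved techniques. The function name `dp_count` and the choice of `epsilon` are assumptions for illustration.

```python
import random


def dp_count(true_count: int, epsilon: float, rng: random.Random) -> float:
    """Release a count with Laplace noise calibrated for epsilon-differential privacy.

    A counting query has sensitivity 1 (adding or removing one patient changes
    the count by at most 1), so the Laplace scale is 1 / epsilon.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    scale = 1.0 / epsilon
    # The difference of two independent exponential draws with mean `scale`
    # follows a Laplace distribution centred at zero with that scale.
    noise = rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)
    return true_count + noise


# Usage: a smaller epsilon gives stronger privacy and a noisier answer.
rng = random.Random(42)
noisy = dp_count(true_count=130, epsilon=0.5, rng=rng)
```

Each released statistic spends part of a privacy budget, so repeated queries over the same patients need to be accounted for together.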

Cloud deployment of healthcare AI systems raises additional privacy considerations. While cloud services can provide necessary computational resources for AI workloads, healthcare organisations must ensure cloud providers meet Australian privacy requirements and provide appropriate data sovereignty guarantees. This often involves detailed data processing agreements and regular security audits.

The Australian Digital Health Agency provides guidance on data governance for digital health systems, including AI applications. Healthcare organisations should review this guidance alongside their obligations under state and territory health privacy legislation, which may impose additional requirements beyond the Privacy Act.

Implementation Strategy for Healthcare AI

Successful healthcare AI implementation requires a structured approach that addresses technical, clinical, and regulatory requirements while managing change across the organisation.

Start with pilot projects that address specific clinical or administrative pain points rather than attempting broad AI transformation. Focus on workflows where AI can provide clear value — such as automating routine documentation or flagging high-risk patients — and where clinical staff can easily integrate AI recommendations into existing practices.

Clinical engagement from the beginning is essential. Healthcare AI systems that are developed without input from end-users often fail to achieve adoption, regardless of their technical sophistication. Regular feedback sessions with clinicians during development help ensure the AI system addresses real workflow challenges and provides recommendations in formats that support clinical decision-making.

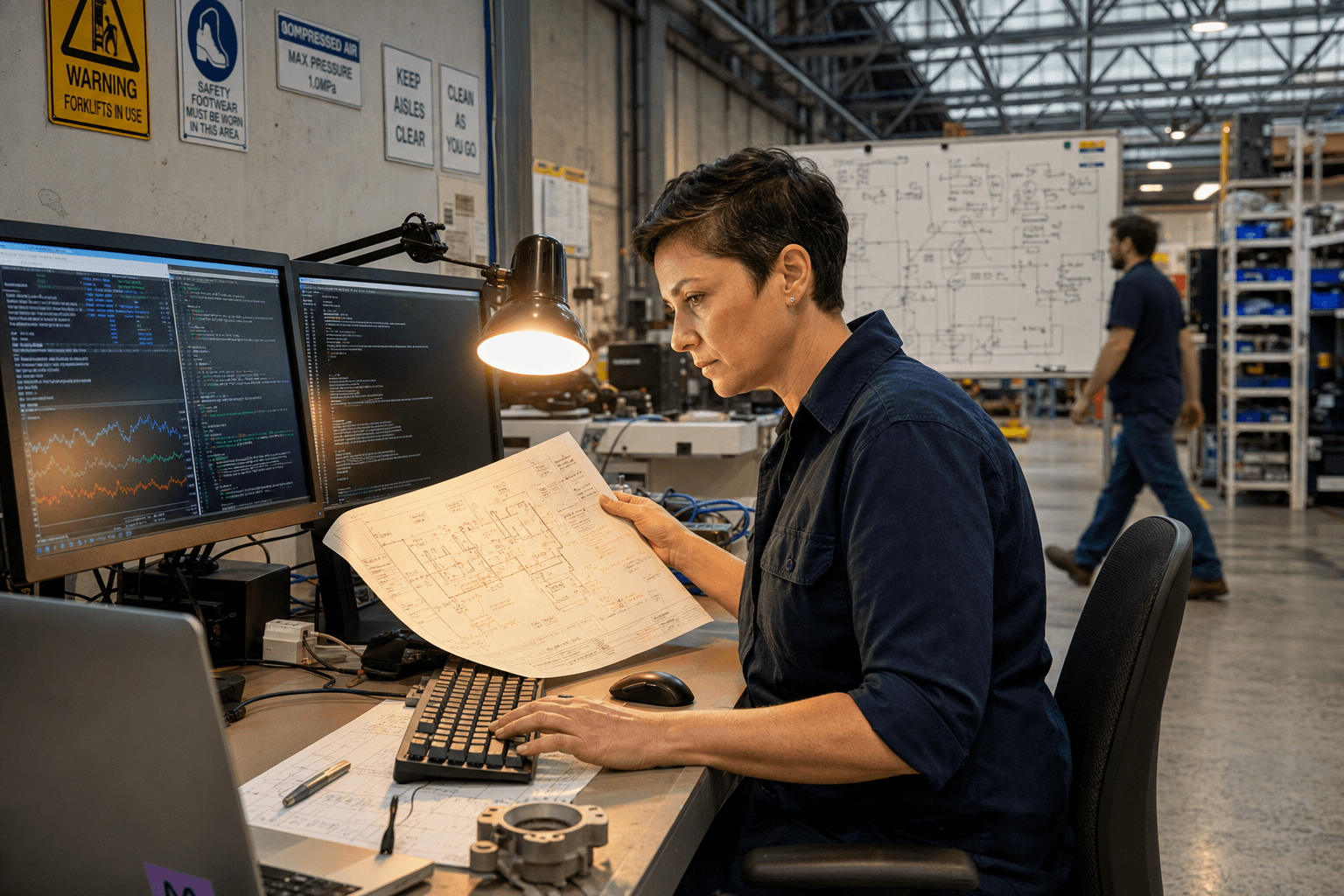

Data infrastructure assessment should precede AI implementation. Many healthcare organisations have data quality issues, integration challenges, or security gaps that must be addressed before AI systems can operate effectively. Investment in data infrastructure — including data governance processes, integration platforms, and security controls — often provides returns beyond the immediate AI use case.

Regulatory compliance planning must begin early in the development process. Understanding whether your AI system qualifies as a medical device under TGA regulations affects development timelines, validation requirements, and ongoing operational obligations. Early consultation with regulatory experts can prevent costly delays or redesign requirements later in the project.

Change management becomes critical for AI systems that modify clinical workflows. Training programs, ongoing support, and clear escalation paths help healthcare professionals integrate AI tools effectively. Successful implementations typically include clinical champions who can provide peer-to-peer training and address concerns as they arise.

Measuring Healthcare AI Impact

Measuring the impact of healthcare AI requires metrics that capture both clinical outcomes and operational efficiency while accounting for the complex, multi-stakeholder nature of healthcare delivery.

Clinical outcome metrics should align with existing quality indicators where possible. For diagnostic support systems, relevant metrics might include time to diagnosis, diagnostic accuracy rates, or inter-observer variability. For predictive systems, consider sensitivity and specificity rates, early warning effectiveness, or patient outcomes following AI-flagged interventions.
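Sensitivity and specificity come straight from the confusion matrix: sensitivity is the share of true cases the system flagged, and specificity is the share of non-cases it correctly left alone. A minimal sketch, assuming binary labels where 1 means the condition was confirmed and binary predictions where 1 means the system raised a flag:

```python
def sensitivity_specificity(y_true, y_pred):
    """Return (sensitivity, specificity) for paired binary labels and predictions."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t and p)          # flagged, confirmed
    tn = sum(1 for t, p in zip(y_true, y_pred) if not t and not p)  # not flagged, not a case
    fp = sum(1 for t, p in zip(y_true, y_pred) if not t and p)      # flagged, not a case
    fn = sum(1 for t, p in zip(y_true, y_pred) if t and not p)      # missed case
    # Guard against empty classes, where the rate is undefined.
    sensitivity = tp / (tp + fn) if (tp + fn) else float("nan")
    specificity = tn / (tn + fp) if (tn + fp) else float("nan")
    return sensitivity, specificity
```

For an early warning system, low sensitivity means missed deteriorations, while low specificity shows up as alert fatigue, so both are worth reporting together.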

Operational efficiency metrics capture the administrative value of AI systems. Time savings from automated documentation, reduction in manual coding errors, or improved appointment scheduling efficiency provide measurable operational returns. These metrics often demonstrate immediate value while clinical outcome improvements may take longer to measure reliably.

Clinician satisfaction surveys provide insight into AI system usability and workflow integration. High-performing AI systems typically show sustained or improved clinician satisfaction scores, indicating the AI is supporting rather than hindering clinical work. Low satisfaction scores often predict poor long-term adoption regardless of technical performance.

Patient outcome tracking requires longer measurement periods and careful control for confounding factors. Healthcare organisations should establish baseline measurements before AI implementation and track relevant outcomes over sufficient time periods to detect meaningful changes. Collaboration with clinical research teams can help design appropriate measurement frameworks.

Regulatory reporting requirements may dictate specific metrics for registered medical devices. The TGA requires post-market surveillance data that demonstrates ongoing safety and effectiveness. Planning these measurements from the beginning of implementation ensures compliance while providing valuable insights into AI system performance.

From our experience with healthcare AI implementations, the most successful projects establish clear measurement frameworks before deployment and commit to regular performance reviews with clinical and administrative stakeholders. This approach enables continuous improvement while demonstrating value to organisational leadership.

Healthcare AI represents a significant opportunity for Australian healthcare organisations to improve clinical outcomes while managing operational pressures. Success requires careful attention to regulatory requirements, clinical workflow integration, and ongoing performance measurement.

We help healthcare organisations navigate the technical and regulatory complexity of AI implementation — from AI product strategy through AI engineering and ongoing data science and analytics. If you're evaluating AI opportunities in healthcare, get in touch to discuss your specific requirements and regulatory context.

Chris Kerr

Founder of Horizon Labs. Twenty years building production software for Australian mid-market businesses, the last seven focused on putting AI into systems that operate at 3am without anyone watching. Writes about strategy, fractional CTO work, and the operational discipline that separates AI demos from AI products.