dbt for Mid-Market: Data Transformation Without Enterprise Costs

dbt brings enterprise-grade data transformation capabilities to mid-market Australian companies without enterprise costs. Learn how to implement modern data pipelines using SQL and software engineering best practices.


Data transformation shouldn't require a six-figure Informatica license or a team of ETL specialists. dbt (data build tool) brings enterprise-grade data transformation capabilities to mid-market companies at a fraction of traditional costs. For Australian businesses with growing data needs but realistic budgets, dbt offers a path to modern analytics without the traditional enterprise overhead.

What is dbt and Why Should Mid-Market Companies Care?

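dbt is an open-source tool that handles the transformation step of your pipeline: it works on data that has already been loaded into your warehouse. You write each transformation as a SQL SELECT statement, and dbt turns it into a table or view, runs models in dependency order, and tests the results. Unlike traditional ETL tools that require proprietary platforms and specialist skills, dbt runs inside the data warehouse you already have, using SQL your team already knows.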

For mid-market companies, this means you can build robust data pipelines without hiring expensive ETL developers or licensing complex enterprise software. Your analysts can write transformations in SQL, version control changes like code, and deploy updates safely to production.
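To make "runs inside your warehouse" concrete: a dbt model is just a SELECT, and dbt wraps it in the DDL needed to create a view or table. The Python sketch below is a deliberately simplified illustration of that wrapping, not the SQL dbt actually generates. Real materialisations are adapter-specific and handle details such as atomic swaps. The `materialise` helper and the `analytics` schema name are hypothetical, and only views and tables are covered.

```python
def materialise(model_name, select_sql, materialization="view", schema="analytics"):
    """Wrap a model's SELECT in simplified DDL, loosely mimicking dbt's
    view and table materialisations.

    Hypothetical helper for illustration only: dbt's real materialisations
    are adapter-specific macros that do considerably more.
    """
    relation = f"{schema}.{model_name}"
    # Models are bare SELECTs; strip any trailing semicolon before wrapping.
    body = select_sql.strip().rstrip(";")
    if materialization == "view":
        return f"CREATE VIEW {relation} AS (\n{body}\n)"
    if materialization == "table":
        return f"CREATE TABLE {relation} AS (\n{body}\n)"
    # Incremental, ephemeral and other materialisations are out of scope here.
    raise ValueError(f"unsupported materialization: {materialization!r}")


if __name__ == "__main__":
    print(materialise("stg_customers", "SELECT customer_id FROM raw.customers;"))
```

In a real project you choose the materialisation per model in configuration rather than writing any DDL yourself. That is the point of the design: analysts own the SELECT, and dbt owns the boilerplate.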

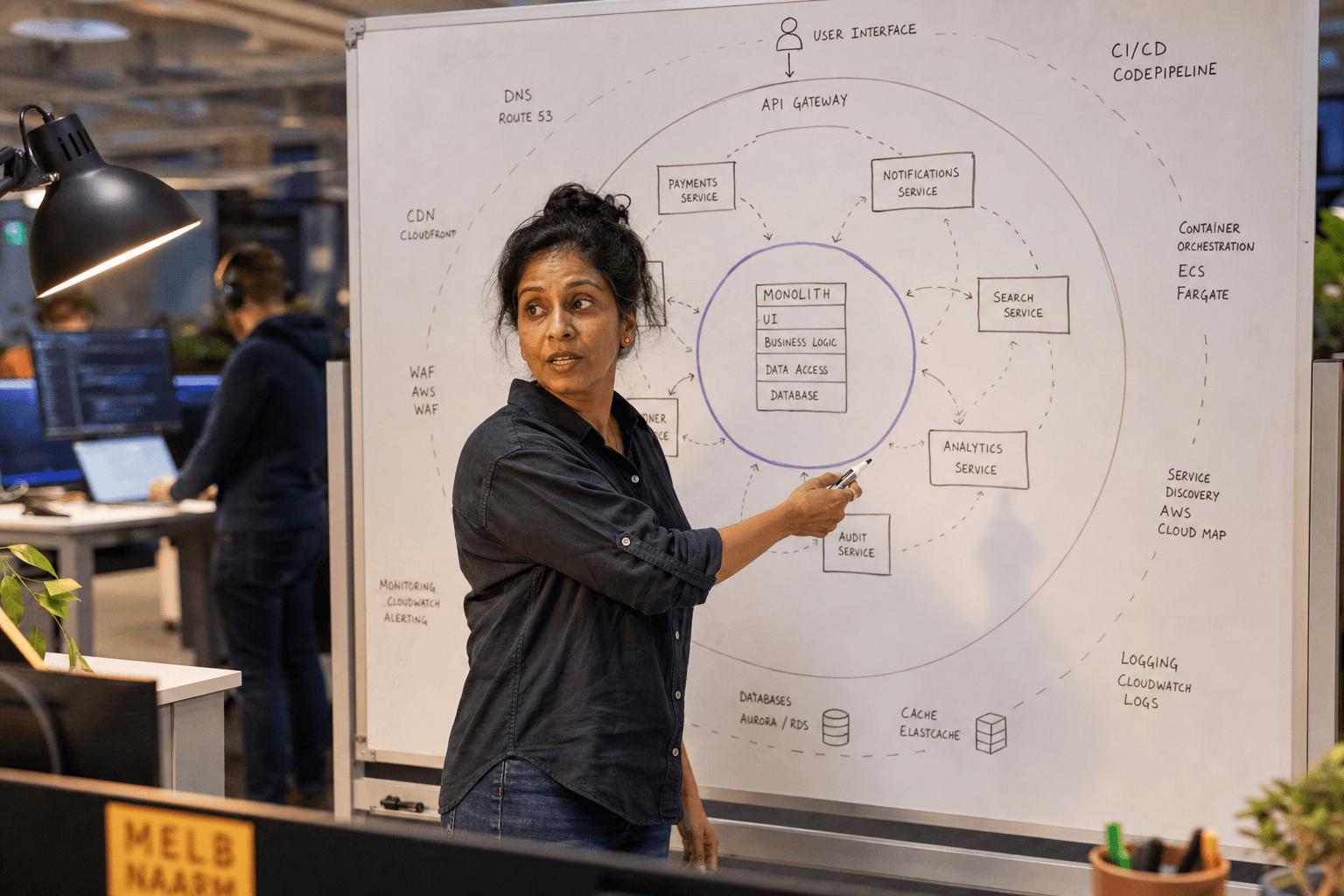

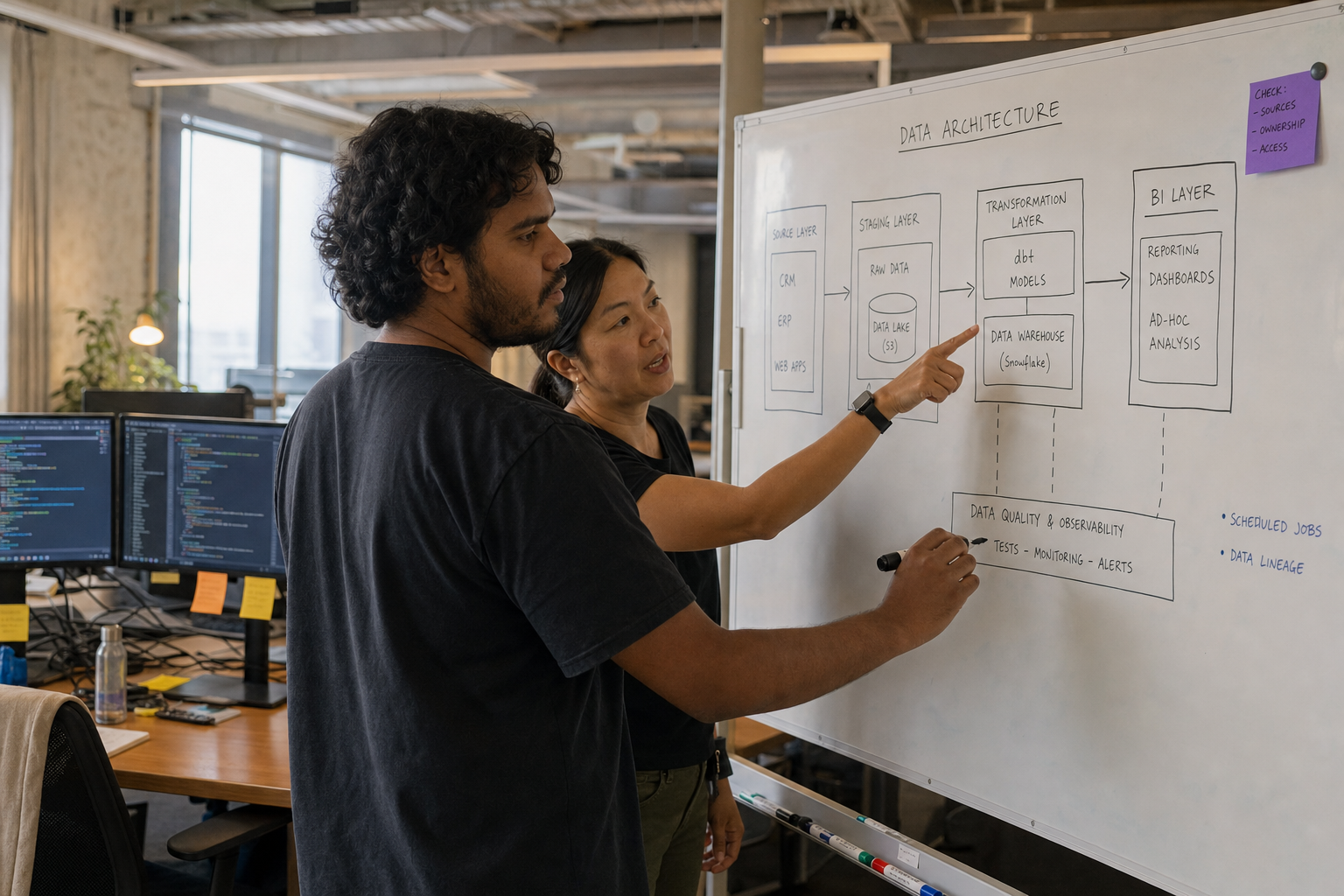

The Mid-Market Data Stack: Where dbt Fits

A typical mid-market data stack includes several components working together:

- Data warehouse: Snowflake, BigQuery, or Redshift

- Transformation: dbt Core, which is completely free, or dbt Cloud, which offers additional features for growing teams

- Orchestration: open-source tools like Airflow or Dagster, or dbt Cloud's built-in scheduler

- Business intelligence: Looker, Tableau, or Power BI, sitting on top of your transformed data

dbt sits between your raw data and your BI tools, transforming messy source data into clean, business-ready tables. This architecture lets you choose each tool on its merits and limits vendor lock-in, without enterprise-style licensing fees. Many Australian mid-market companies find this approach costs substantially less than a traditional enterprise data platform, although actual savings depend on warehouse compute usage and team size.

Getting Started with dbt: A Practical Approach

Start with dbt Core (free) and a simple transformation workflow:

- Install dbt locally: Use pip or conda to install dbt with your warehouse adapter

- Connect to your warehouse: Configure profiles.yml with your database credentials

- Create your first model: Write a SQL SELECT statement as a .sql file

- Run dbt: Execute the dbt run command to materialise your transformations

Your first model might clean customer data:

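```sql
-- models/staging/stg_customers.sql
-- Assumes a source named app_db with a customers table is declared in a sources .yml file.
SELECT
    customer_id,
    TRIM(LOWER(email)) AS email,                -- normalise email for matching
    COALESCE(first_name, 'Unknown') AS first_name,
    CAST(created_at AS DATE) AS signup_date     -- works on Snowflake, BigQuery and Redshift
FROM {{ source('app_db', 'customers') }}
WHERE email IS NOT NULL                         -- drop rows without a usable email
```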

This approach gives you immediate value while establishing the foundation for more complex transformations.
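If you want to sanity-check a staging model's logic before pointing it at your warehouse, one option is to run an equivalent query against a few sample rows in SQLite. The sketch below assumes Python's built-in sqlite3 module and made-up sample data. Because it runs outside dbt, it replaces {{ source('app_db', 'customers') }} with a plain table name. It also uses SQLite's DATE() function in place of the CAST to DATE, since SQLite has no native date type.

```python
import sqlite3

# Invented sample rows standing in for the app_db.customers source table.
RAW_CUSTOMERS = [
    (1, "  Alice@Example.COM ", "Alice", "2024-03-01 09:15:00"),
    (2, "bob@example.com", None, "2024-03-02 17:40:00"),
    (3, None, "Carol", "2024-03-03 08:00:00"),
]

# The staging SELECT with source() swapped for a table name and the date
# cast swapped for SQLite's DATE() function.
STG_CUSTOMERS_SQL = """
SELECT
    customer_id,
    TRIM(LOWER(email)) AS email,
    COALESCE(first_name, 'Unknown') AS first_name,
    DATE(created_at) AS signup_date
FROM customers
WHERE email IS NOT NULL
ORDER BY customer_id
"""


def run_staging_model(rows):
    """Load rows into an in-memory SQLite table and run the staging query."""
    conn = sqlite3.connect(":memory:")
    try:
        conn.execute(
            "CREATE TABLE customers ("
            "customer_id INTEGER, email TEXT, first_name TEXT, created_at TEXT)"
        )
        conn.executemany("INSERT INTO customers VALUES (?, ?, ?, ?)", rows)
        return conn.execute(STG_CUSTOMERS_SQL).fetchall()
    finally:
        conn.close()


if __name__ == "__main__":
    for row in run_staging_model(RAW_CUSTOMERS):
        print(row)
```

Customer 3 is dropped by the email filter, customer 2 gets the 'Unknown' default, and Alice's email is trimmed and lower-cased. These are the behaviours you would later lock in with dbt tests.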

Best Practices for Mid-Market dbt Implementation

Start with staging models: Clean and standardise your source data first. Create one staging model per source table, handling basic transformations like data type casting and column renaming.

Use the ref() function: Reference other models using {{ ref('model_name') }} rather than hard-coding table names. This creates dependency graphs and ensures models run in the correct order.
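The way ref() turns into a run order can be sketched in a few lines of Python. The example below scans each model's SQL for ref('...') calls, builds a dependency graph, and topologically sorts it with the standard library's graphlib. The three model names are hypothetical, and the regular expression handles only the single-argument ref('name') form. dbt's real parser goes through Jinja and supports more, so treat this as an illustration of the idea rather than how dbt is implemented.

```python
import re
from graphlib import TopologicalSorter

# Hypothetical project: model name -> SQL body.
MODELS = {
    "stg_customers": "SELECT * FROM {{ source('app_db', 'customers') }}",
    "stg_orders": "SELECT * FROM {{ source('app_db', 'orders') }}",
    "fct_customer_orders": """
        SELECT c.customer_id, COUNT(o.order_id) AS order_count
        FROM {{ ref('stg_customers') }} AS c
        LEFT JOIN {{ ref('stg_orders') }} AS o USING (customer_id)
        GROUP BY c.customer_id
    """,
}

# Matches single-argument {{ ref('name') }} or {{ ref("name") }} calls only.
REF_PATTERN = re.compile(r"""\{\{\s*ref\(\s*['"]([^'"]+)['"]\s*\)\s*\}\}""")


def build_dag(models):
    """Return {model: set of upstream models} from the ref() calls in each body."""
    return {name: set(REF_PATTERN.findall(sql)) for name, sql in models.items()}


def run_order(models):
    """Order models so every upstream model comes before its dependants."""
    return list(TopologicalSorter(build_dag(models)).static_order())


if __name__ == "__main__":
    print(run_order(MODELS))
```

If two models ref each other, static_order() raises graphlib.CycleError. dbt likewise refuses to compile a project with circular dependencies, which is one practical benefit of using ref() over hard-coded table names.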

Implement data quality tests: Add simple tests to catch data issues early. Test for uniqueness, not-null values, and referential integrity:

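```yaml
version: 2

models:
  - name: stg_customers
    columns:
      - name: customer_id
        tests:
          - unique
          - not_null

  # stg_orders is a hypothetical model, shown to illustrate a referential-integrity test
  - name: stg_orders
    columns:
      - name: customer_id
        tests:
          - relationships:
              to: ref('stg_customers')
              field: customer_id
```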
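dbt's generic tests compile to a query that selects failing rows, and a test passes when that query returns nothing. The sketch below approximates the unique and not_null checks in plain SQL against an in-memory SQLite table. The query text is a simplification of dbt's built-in test macros, not a copy of them, and the table contents are invented to produce one failure of each kind.

```python
import sqlite3


def unique_test_sql(table, column):
    """Failing rows for a uniqueness check: non-null values seen more than once."""
    # Identifiers come from project config, not user input, so f-strings are acceptable here.
    return (
        f"SELECT {column}, COUNT(*) AS n_records FROM {table} "
        f"WHERE {column} IS NOT NULL "
        f"GROUP BY {column} HAVING COUNT(*) > 1"
    )


def not_null_test_sql(table, column):
    """Failing rows for a not-null check: any row where the column is NULL."""
    return f"SELECT * FROM {table} WHERE {column} IS NULL"


def run_column_tests(conn, table, column):
    """Return {test_name: failing_row_count}; 0 means the test passes."""
    checks = {
        "unique": unique_test_sql(table, column),
        "not_null": not_null_test_sql(table, column),
    }
    return {name: len(conn.execute(sql).fetchall()) for name, sql in checks.items()}


def demo():
    """Build a small stg_customers table with one duplicate id and one NULL id."""
    conn = sqlite3.connect(":memory:")
    try:
        conn.execute("CREATE TABLE stg_customers (customer_id INTEGER, email TEXT)")
        conn.executemany(
            "INSERT INTO stg_customers VALUES (?, ?)",
            [(1, "a@example.com"), (2, "b@example.com"),
             (2, "c@example.com"), (None, "d@example.com")],
        )
        return run_column_tests(conn, "stg_customers", "customer_id")
    finally:
        conn.close()


if __name__ == "__main__":
    print(demo())
```

Expressing tests as "queries that return bad rows" is what makes them cheap to add. When a test fails, you can run the same query yourself to see exactly which records broke it.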

Version control everything: Store your dbt project in Git from day one. This enables collaboration, rollbacks, and deployment automation as you scale.

When dbt Might Be Overkill

dbt isn't always the right choice for mid-market companies:

- Simple reporting needs: If you just need basic aggregations from a single source, your BI tool might be sufficient

- Real-time requirements: dbt is designed for batch processing, not streaming transformations

- Very small data volumes: Minimal data might not justify the setup overhead

- No SQL skills: If your team can't write SQL, the learning curve might be steep

For these scenarios, consider simpler alternatives or invest in SQL training before adopting dbt. Our data infrastructure team can help assess whether dbt fits your specific requirements.

dbt Cloud vs dbt Core: Choosing the Right Option

dbt Core is free and runs anywhere you can install Python. It's perfect for getting started and works well for teams comfortable with command-line tools and CI/CD setup.

dbt Cloud adds a web-based IDE, job scheduling, and collaboration features. For mid-market teams, it provides professional workflow management while remaining significantly more cost-effective than traditional enterprise alternatives. The hosted environment eliminates infrastructure management overhead.

Building Your dbt Implementation Roadmap

Phase 1 (Weeks 1-4): Set up dbt Core locally, connect to your warehouse, and create staging models for your most critical data sources. Focus on getting familiar with dbt's concepts and workflow.

Phase 2 (Weeks 5-8): Build mart models for your key business metrics. Add data quality tests and documentation. Establish a Git workflow for version control.

Phase 3 (Weeks 9-12): Implement CI/CD for automated testing and deployment. Consider upgrading to dbt Cloud if manual orchestration becomes a bottleneck.
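A common CI/CD pattern with dbt Core is "slim CI". On each pull request, you build and test only the models that changed plus everything downstream of them, and defer unchanged upstream models to production. The Python sketch below assembles that command and runs it with subprocess. It assumes dbt Core is installed in the CI runner, that a target called ci exists in profiles.yml, and that the production run's manifest.json has been downloaded to a directory called prod-artifacts. The target name and path are hypothetical.

```python
import subprocess


def slim_ci_command(state_dir="prod-artifacts", target="ci"):
    """Build a dbt Core command that builds and tests modified models and their children.

    state:modified+ selects changed models plus downstream dependants.
    --defer with --state lets unchanged parents resolve to the production
    relations recorded in the manifest under state_dir.
    """
    return [
        "dbt", "build",
        "--select", "state:modified+",
        "--defer",
        "--state", state_dir,
        "--target", target,
    ]


def run_slim_ci(state_dir="prod-artifacts", target="ci"):
    """Run the command; a failed model or test gives a non-zero exit code,
    which the CI system treats as a failed job."""
    result = subprocess.run(slim_ci_command(state_dir, target), check=False)
    return result.returncode


if __name__ == "__main__":
    raise SystemExit(run_slim_ci())
```

dbt build runs models and their tests together in DAG order, so a broken upstream test stops dependants from being built in the same CI run.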

This phased approach lets you prove value quickly while building towards a production-ready data transformation pipeline.

Common Implementation Pitfalls to Avoid

Over-engineering early: Don't try to model every possible business scenario in your first implementation. Start with your most important metrics and iterate.

Ignoring performance: Large transformations can be expensive in cloud warehouses. Use incremental models and partitioning for high-volume tables.
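An incremental model avoids reprocessing history. On each run it transforms only the rows newer than what is already in the target table, then merges them in by a unique key. In dbt this is configured with materialized='incremental', a unique_key, and an is_incremental() filter in the model's SQL. The Python sketch below mimics that high-water-mark-and-merge logic on lists of dictionaries. The column names id and updated_at are assumptions, and in a real warehouse dbt does this with MERGE or delete+insert statements rather than in Python.

```python
def incremental_merge(target, source, unique_key="id", cursor="updated_at"):
    """Merge source rows newer than the target's high-water mark into target.

    Returns (merged_rows, rows_processed). On a first run (empty target)
    every source row is processed, like a full build.
    Cursor values here are ISO-8601 strings, which compare correctly as text.
    """
    # High-water mark: latest cursor value already loaded, or None if empty.
    high_water = max((row[cursor] for row in target), default=None)

    # Equivalent of the is_incremental() WHERE clause.
    new_rows = [
        row for row in source
        if high_water is None or row[cursor] > high_water
    ]

    # Upsert on unique_key: a newer version of a row replaces the old one.
    merged = {row[unique_key]: row for row in target}
    for row in new_rows:
        merged[row[unique_key]] = row

    ordered = sorted(merged.values(), key=lambda row: row[unique_key])
    return ordered, len(new_rows)
```

Using >= instead of > would re-process rows that share the latest timestamp. With a unique key the merge makes that harmless, and it protects against rows that land with the same timestamp as the previous run, which is why some teams prefer it.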

Skipping documentation: Future team members (including yourself) will thank you for clear model documentation and descriptions.

Lack of testing strategy: Production data issues are expensive to fix. Implement comprehensive testing from the start.

The Australian Context: Data Sovereignty and Compliance

Australian companies must consider data sovereignty requirements when choosing data tools. dbt transforms data in place within your chosen warehouse, which can help you meet data residency requirements. If your warehouse runs in an Australian region, such as AWS in Sydney, Google Cloud in Melbourne, or Azure Australia East, the data dbt transforms stays in that region. If you use dbt Cloud, also confirm where your account is hosted, because its IDE can preview query results.

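For companies in regulated industries like finance or healthcare, dbt supports compliance work through its audit trail. Every model lives in Git, so changes are versioned and reviewable. Each run also writes artifacts such as run_results.json that record what ran and whether it succeeded. Transformations are reproducible from any given commit, and documented to the extent your team writes model descriptions.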
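As an example of that audit trail, each dbt invocation writes a target/run_results.json artifact recording what ran and how it finished. The sketch below reduces one to a compact audit record you could store alongside your Git history. It assumes the artifact has a results list whose entries carry unique_id, status, and execution_time fields, plus a metadata.generated_at timestamp; check these against the artifact schema for your dbt version. The project name my_project and the sample data are invented.

```python
import json
from collections import Counter

# Invented sample shaped like a dbt run_results.json artifact.
SAMPLE_RUN_RESULTS = json.dumps({
    "metadata": {"generated_at": "2024-05-01T02:00:00Z"},
    "results": [
        {"unique_id": "model.my_project.stg_customers",
         "status": "success", "execution_time": 1.5},
        {"unique_id": "test.my_project.unique_stg_customers_customer_id",
         "status": "pass", "execution_time": 0.25},
        {"unique_id": "test.my_project.not_null_stg_customers_customer_id",
         "status": "fail", "execution_time": 0.25},
    ],
})


def summarise_run_results(raw_json):
    """Reduce a run_results.json document to a small audit record."""
    artifact = json.loads(raw_json)
    results = artifact.get("results", [])
    return {
        "generated_at": artifact.get("metadata", {}).get("generated_at"),
        # Models report success/error/skipped; tests report pass/fail/warn/error.
        "status_counts": dict(Counter(r["status"] for r in results)),
        "failed_nodes": sorted(
            r["unique_id"] for r in results if r["status"] in ("error", "fail")
        ),
        "total_seconds": round(sum(r.get("execution_time", 0.0) for r in results), 2),
    }


if __name__ == "__main__":
    print(summarise_run_results(SAMPLE_RUN_RESULTS))
```

Against a real project you would pass in the file contents, for example Path("target/run_results.json").read_text(), right after each scheduled run.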

Integration with Modern Analytics Platforms

dbt works with the analytics tools Australian companies already use. Because it writes ordinary tables and views to your warehouse, Tableau, Power BI, Looker, or any other tool that can query the warehouse can consume its output directly.

The tool also fits alongside cloud services available in Australian regions. Whether you're governing data with AWS Lake Formation, transforming Google Analytics data exported to BigQuery, or running Azure Synapse, dbt can be part of your end-to-end data pipeline.

Building Internal Capability vs External Support

Many Australian mid-market companies face a choice: build dbt expertise internally or engage external support. Building internal capability takes time but creates long-term ownership. External support accelerates implementation but requires knowledge transfer.

A hybrid approach often works best: engage experts for initial setup and best practices, then build internal capability for ongoing maintenance. This approach reduces time-to-value while ensuring your team can maintain and extend the solution.

Our AI engineering and data infrastructure teams regularly help Australian companies implement dbt as part of broader data modernisation initiatives.

Moving Forward: From Implementation to Value

Successful dbt implementations focus on business outcomes, not just technical implementation. Start by identifying your most critical business questions that better data could answer. Build transformations that directly support these decisions.

Measure success through business metrics: faster time-to-insight, improved data quality, or reduced manual reporting effort. Technical metrics like test coverage and model performance matter, but business value drives continued investment.

Consider dbt as part of a broader data strategy that might include application modernisation or AI product strategy. Modern data transformation often enables AI initiatives that wouldn't be possible with legacy ETL approaches.

For more insights on building modern data capabilities, explore our data science and analytics resources or get in touch to discuss your specific data transformation challenges.

James Liu

Lead Data Engineer at Horizon Labs. Builds the data plumbing AI runs on — dbt pipelines, vector stores, feature platforms. Twelve years across Australian financial services, mining, and logistics. Believes data quality work is the highest-leverage AI investment most teams underspend on, and writes about why.